Converting Our Stories Into Multi-Screen Experiences

Storytelling takes many forms. In the past, stories were told orally, with people telling and retelling myths, fables and even histories. As writing technology became more prevalent, we began to record our stories, and we told them in the pages of books. Now, our society is awash in different devices and technologies, and those traditions of spoken stories and printed stories are blurring.

Multi-screen narratives are being told across all kinds of platforms, pages and devices. We watch them, tap them and learn from them, and at their best they immerse us in the storyteller’s world. This article outlines what I believe are the five essentials of telling multi-screen stories.

How I Fell In Love With Interactive Storytelling

First, a little background. My childhood was spent in Nigeria, West Africa. I am a member of the Tiv tribe, a group of about 6 million people clustered in Nigeria’s Benue River Valley. As a child, I heard a lot of Nigerian folktales, about animals, humans and even magic. In Nigerian narrative tradition, stories are often told orally, in front of a gathered audience. During festivals and cultural events, men even dress up in elaborate costumes and perform stories for the crowds. I have vivid memories of these stories and have always been curious about how they could be translated into something digital and interactive.

The Kwagh Hir, or Thing of Magic, my tribe’s largest cultural festival (Image: Naptu2)

Those fables are a piece of my cultural inheritance. They always seemed to contain essential truths about humans. Take the story of the Ear and the Mosquito. One day, the Ear steals food from the Mosquito and refuses to pay it back. In anger, the Mosquito visits the Ear every evening, demanding that the food be returned and annoying the Ear all night with his buzzing. It’s an old tale, with many versions, but the moral is consistent: don’t steal from your friends.

Creating modern, interactive versions of these stories is possible, but how exactly do we do that? Let’s begin by talking about what I mean by the word “multi-screen.”

A Bit About Context And Screens

When speaking about multi-screen storytelling, remember that screens have different contexts, not only different capabilities. The same screen on which you casually watch videos at home becomes a closely guarded viewport when you’re watching a movie on a crowded train. The context in which people view stories matters more than the device’s specifications. When we tell interactive stories, we need to be aware of this, and embrace it.

I like to focus on the following screens:

- Sensors (Twine, GPS, Arduino, motion detectors, etc.)

- Mobiles and tablets (phones, tablets, laptops and everything in between)

- Flat-screens (desktops, TVs, etc.)

- Public and immersive displays (store kiosks, large stadium screens, projectors, Kinects, etc.)

Not all of these need to be used at the same time, because they won’t all be appropriate to the story you are telling. Context is extremely important.

Now, as promised, here are the five essentials of multi-screen storytelling.

1. Divide Your Story Into Separate Content Blocks

When we create multi-screen narratives, we need to find natural breakpoints in the story, places where the visual or narrative content can easily be separated. This enables us to deliver different segments to different devices, in different contexts.

Kolobok is a Slavic children’s story about the adventures of a round yellow cake. For the Moscow International Festival, a large team of designers and animators from SilaSveta Studio incorporated it into a truly fascinating demonstration of storytelling. Before the show, the team set up a large touchscreen at the children’s height. With their hands, the kids could manipulate parts of the animation by adding movement and color.

A public display for children to play with

For the show itself, the full story was projected onto the facade of a large building, allowing the crowd of adults and their children to watch the narrative unfold. Along with sound, it made for another discrete content block, one that closely resembled a 3-D movie.

The full animated story in front of a large festival crowd

While the touchscreen and the movie were different views of the same content, they could exist as independent pieces and did not have to appear next to each other. The SilaSveta team found the natural breakpoints in its story and created two separate visual experiences to match them.
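If the story is being built as software, one way to make those breakpoints explicit is to model each segment as its own block and tag it with the screen capabilities it needs. Each screen then pulls only the blocks it can present well. The sketch below is illustrative only: `ContentBlock`, `blocks_for_screen` and the capability names are hypothetical, and the block names merely echo the Kolobok example rather than describe how SilaSveta built it.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContentBlock:
    """One self-contained segment of a story (hypothetical model)."""
    block_id: str
    summary: str
    # Capabilities a screen must offer to present this block well.
    requires: frozenset = field(default_factory=frozenset)


def blocks_for_screen(blocks, capabilities):
    """Return, in story order, the blocks whose requirements this screen meets."""
    caps = frozenset(capabilities)
    return [block for block in blocks if block.requires <= caps]


# Hypothetical story split at its natural breakpoints.
STORY = [
    ContentBlock("intro", "Short opening narration"),
    ContentBlock("decorate-kolobok", "Kids add movement and color by hand",
                 frozenset({"touch", "large_display"})),
    ContentBlock("facade-show", "Full animated story with sound",
                 frozenset({"projector", "audio"})),
]

kiosk = {"touch", "large_display"}
print([b.block_id for b in blocks_for_screen(STORY, kiosk)])  # ['intro', 'decorate-kolobok']
```

A block with no requirements, like the opening narration here, can go to every screen, which fits the idea that each block should be able to stand on its own.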

Questions To Ask

- Where are the breakpoints in the story?

Divide your content so that it makes sense in context. The practice of responsive design gives us numerous guidelines on how to do this.

- Can those content blocks exist independently?

Sometimes, the answer is no, but it depends on the story. In the Kolobok example above, they can. In other interactive stories, such as Snow Fall from the New York Times, the blocks are chapters in a single story and should be kept together.

2. Offer People Multiple Perspectives

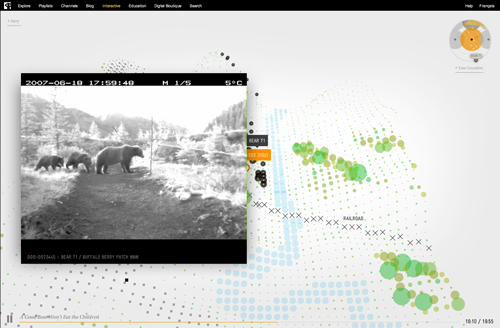

Bear 71 is an award-winning multi-screen experience created by Jeremy Mendes and Leanne Allison. The creators tell the story of a bear living in Banff National Park in Canada. It feels like a cross between a role-playing game and a TV documentary, and as a linear narrative with a non-linear interface, it works beautifully.

The Bear 71 story is told in a highly abstracted interface.

Multiple viewpoints are accessible. Online, you roam in a stylized landscape, watch crittercam footage from the forest, and otherwise live as bears do — freely. Even though it may look like a game interface, you are not so much “playing” as you are participating in a story. Watching real crittercam footage, you see what the forest silently sees. You also have the option to turn on your webcam (“stealth mode”) to see other users around the world, all watching the same story online.

During shows and installations, the team responsible for Bear 71 set up augmented-reality applications and motion-detection cameras, so that visitors could experience what it’s like to be photographed by a motion-triggered camera, just as the park’s bears are. Playing with these apps and cameras gave visitors a bit of the same physical experience that the bears had.

Questions To Ask

- Does the narrative change when viewed from a different perspective?

A variety of perspectives can make a narrative much more fascinating. Bear 71 forces us to see the world first from the bear’s perspective and to sympathize with its loss of habitat, but other viewpoints take a slightly different angle. The voyeurism common in our digital sharing culture takes on a different meaning when used for animal surveillance.

- What data sets can be used in the narrative?

Bear 71 cleverly combines crittercam video, GPS data, cell tower data, augmented reality, and topographical data. The photographs of visitors to the installation provide an additional emotional layer of data. The data we bring into our stories helps to define additional viewpoints and characters.

3. Redefine A Tradition

As Western culture has moved more deeply into Nigeria, Nigeria’s traditions are weakening. I wanted to take a piece of my culture and put it in the cloud, instead of leaving it locked in the heads of our oral storytellers. That meant redefining how the stories are relayed, how they are saved and, most importantly, what messages they convey to the audience.

In 2011, I started a project named Pixel Fable in which I take those traditional stories and reinterpret them online. In essence, I’m creating an interactive archive of Nigerian stories. As mentioned earlier, the oral histories of Nigerians are rich, but capturing them and translating them into digital stories means they will reach a wider audience. About 25% of my website’s visitors come from the US, while another 25% come from Japan. Canada, France and Germany also send a fair amount of traffic.

Pixel Fable uses responsive websites, iOS apps and augmented-reality animations to reinterpret Nigeria’s oral history.


An introductory screen from “Cricket and Mud Brick,” a new Pixel Fable story built with the Tapestry app

I’ve relied on two primary approaches to reinterpret the old Nigerian storytelling tradition. The first is reaching people on their tablets and phones, where they tap through and read the stories. The spread of mobile devices makes this inevitable: why not tell African fables in a more accessible context? The second is my attempt to update the moral lessons for our modern age. While the original story of the Ear and the Mosquito may be a funny tale about annoying insects, the lesson can be updated to speak about how mosquitoes spread malaria in Nigeria. There’s room to redefine our old myths for the 21st century.

Questions To Ask

- Will people love or hate the reimagined version?

Not every fable or myth can (or should) be recreated digitally. However, if people have an emotional reaction to a story that you have designed and pushed out to multiple screens, that is usually a good sign.

- If people talk about your narrative, will it bring about change in society?

Each Pixel Fable story has a message. Most fables do. Some revolve around love triangles, others around the wisdom of elders, and there’s even one about why you shouldn’t get angry at your friends. They are small messages, but put together, they force us to reconsider how we treat the narrative history of people in Nigeria and West Africa.

4. Immerse People In The Narrative

The Walking Dead, the famous comic and now TV show, used a polling Web app (AMC’s Story Sync, if you like marketing-speak) to ask viewers questions and show related content as an episode was being broadcast on TV. While the app was simply timed to each scene, it was an experiment in multi-screen storytelling that invited audience participation, not just audience attention. Polling has a gimmicky feel to it, but that probably came about as a result of Hollywood pressure and doesn’t reflect the value of the concept in general.

Polling and syncing apps extend narratives from the TV to the couch.
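An app that is "simply timed to each scene" can be as plain as a sorted cue sheet: the companion app tracks roughly how far into the episode the broadcast is and shows whichever cue was most recently passed. The sketch below assumes the playback position is already known; how an app would learn it is out of scope here. `CUES`, `CUE_TIMES` and `cue_for` are hypothetical and are not meant to describe how AMC’s Story Sync actually worked.

```python
from bisect import bisect_right

# Hypothetical cue sheet: (seconds into the episode, second-screen content ID),
# sorted by time. This does not describe how AMC's Story Sync actually worked.
CUES = [
    (0, "welcome"),
    (95, "poll-who-survives"),
    (610, "behind-the-scenes-clip"),
    (1320, "poll-next-episode"),
]
CUE_TIMES = [t for t, _ in CUES]


def cue_for(playback_seconds):
    """Return the content ID of the most recent cue, or None before the first cue."""
    # bisect_right finds the first cue strictly after this time; step back one.
    i = bisect_right(CUE_TIMES, playback_seconds) - 1
    return CUES[i][1] if i >= 0 else None


print(cue_for(100))  # poll-who-survives
```

Because the lookup only depends on the current time, a viewer who opens the app halfway through an episode lands on the right content instead of replaying every earlier cue.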

The creators also added mobile gaming to the mix, bringing viewers “into” the story in a completely different way, in different contexts.

All of these facets of the story’s arc enabled people to immerse themselves in this apocalyptic narrative. Jason Spero of Google notes the need for a seamless experience as users move between devices. Others, however, say that a second-screen experience can be extremely distracting, forcing viewers to miss key parts of the TV show; it is the opposite of an immersive experience, they say, and is confusing to use. In my opinion, each content block should work independently to avoid putting users in this position.

Questions To Ask

- Will people forget where they are?

I’ll be the first to admit that this is not always a good thing. I can’t count the number of times that I’ve almost missed my train stop because my head was buried in my phone. Context, not only device capability, is key. Do you want users to get lost in the story or just engage in a manageable chunk?

- Do the screens you have chosen feel natural?

By this, I’m not referring to pixel density. That is simply impossible to control, and if the story is good, it won’t matter anyway. The screens that people choose will depend a lot on the tasks they want to complete, so make your story feel natural for whatever content block they are interacting with.

5. Design Contextual Interactions

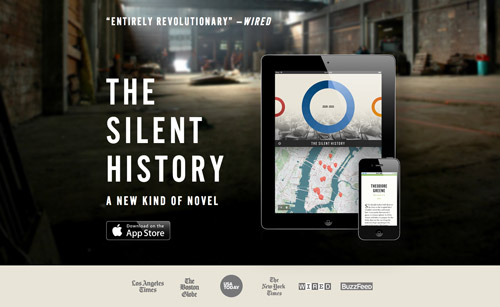

Recently, a number of storytelling apps have relied on location to serve additional content, much in the same way that Foursquare or Google Maps do. The Silent History is a dystopian science-fiction story about children who do not speak. The iPad app contains the whole story, but by visiting certain geo-tagged locations, users can access additional content.

For a novel about children all over the US, inviting readers to physically go to where the story’s kids are makes perfect sense. The additional contextual interaction makes the story more layered and thought-provoking than the app alone could.
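The general mechanism behind location-gated content can be sketched as a distance check. A chapter unlocks when the reader is within some radius of its geo-tag, and the distance is measured with the haversine great-circle formula. This is not The Silent History’s actual implementation, which isn’t described here. The chapter IDs, coordinates and radii are made up, and `unlocked_chapters` is a hypothetical helper.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    # min() guards against tiny floating-point overshoot above 1.0.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


# Hypothetical geo-tagged chapters: id -> (latitude, longitude, trigger radius in metres).
GEO_CHAPTERS = {
    "chapter-park": (40.7532, -73.9822, 150),
    "chapter-pier": (40.7060, -74.0030, 150),
}


def unlocked_chapters(reader_lat, reader_lon, geo_chapters):
    """Return the IDs of chapters whose trigger radius contains the reader's position."""
    return [
        chapter_id
        for chapter_id, (lat, lon, radius_m) in geo_chapters.items()
        if haversine_m(reader_lat, reader_lon, lat, lon) <= radius_m
    ]


print(unlocked_chapters(40.7534, -73.9820, GEO_CHAPTERS))  # ['chapter-park']
```

A generous radius is kinder to readers, since phone GPS fixes in cities can be off by tens of metres.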

We use map data every day, to look for restaurants, check the weather, see road conditions and even check for public transit delays. Other contextual interactions make sense when creating digital stories: taking photographs, texting, sharing and saving information, even body motion. Use these, along with your UI and UX skill sets, to devise new storytelling methods.

Questions To Ask

- Does the device matter?

With the rise of responsive design workflows, our content should not be device-dependent. Some things, however, such as camera or GPS functionality, may be integral to a part of your story, and so the device would need to be factored in.

- Should the interface be designed as a seamless part of the narrative?

As people who work on the Web, we really have a strength here. If we choose to make the interface part of the story, then we can rely on our experience in building websites and content management systems.

- Will your story “remember” anything?

As a child, I folded over the top corner of the page when I had to put a book down. It was a simple way to keep my place. With a narrative split across multiple devices, it might be necessary to design an interaction that flags where you’ve gotten to and then returns you there when you visit again. That all depends on the content, but the question does need to be asked. Everyone hates losing their place on the Internet and having to navigate back, so perhaps we should enable a memory in our stories as well.
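The digital version of a folded page corner can be modeled as a bookmark shared by all of a reader’s devices. The minimal sketch below assumes a single store that every device reports to, and it resolves conflicts with a simple last-write-wins rule. `Bookmark` and `BookmarkStore` are hypothetical, and the in-memory dictionary stands in for whatever server-side storage a real story would use. Because device clocks can disagree, a production system might prefer timestamps assigned by the server.

```python
from dataclasses import dataclass


@dataclass
class Bookmark:
    block_id: str      # which content block the reader was in
    offset: int        # e.g. scene or paragraph index within that block
    updated_at: float  # timestamp (seconds) reported with the update


class BookmarkStore:
    """In-memory stand-in for a store shared by all of a reader's devices."""

    def __init__(self):
        self._marks = {}

    def save(self, reader_id, mark):
        current = self._marks.get(reader_id)
        # Last write wins: ignore updates older than the one already stored,
        # e.g. a phone that syncs late after the reader moved on on a tablet.
        if current is None or mark.updated_at >= current.updated_at:
            self._marks[reader_id] = mark

    def resume(self, reader_id, default_block="intro"):
        """Return where this reader left off, or the start of the story."""
        return self._marks.get(reader_id, Bookmark(default_block, 0, 0.0))


store = BookmarkStore()
store.save("reader-42", Bookmark("chapter-2", 5, updated_at=1000.0))  # from the tablet
store.save("reader-42", Bookmark("chapter-1", 3, updated_at=900.0))   # late sync from the phone: ignored
print(store.resume("reader-42").block_id)  # chapter-2
```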

Conclusion

We have looked at several ways of using multiple screens to tell stories. All of us, from every corner of the globe, have intensely rich cultures filled with stories and fables. Using them to create interactive narratives is another way to explore the power of the Web, to wow people and to record our cultural history.

I would love to see what you come up with, or hear about other examples of clever digital storytelling.

