What Every App Developer Should Know About Android

In today’s fast-paced mobile market, consumers have no patience for mobile apps that compromise their experience. “Crashes” and “Not working” are the most common feedback on Google Play for unstable or sluggish apps (including games).

Those comments and ratings make hundreds of millions of potential downloaders skip those lousy apps. Sounds harsh, but that’s the way it is. An app succeeds not by chance. It is the result of the right decisions made at the right time. The most successful mobile app developers understand the importance of performance, quality and robustness across the array of mobile devices that their customers use.

Examples abound of just how easily a developer can go very, very wrong with their app. An app can behave differently on a variety of mobile devices, even ones running the same OS version with identical hardware components.

Recommended reading: Mobile Best Design Practices For Android

During Q1 of this year (1 January to 31 March), we gathered a significant amount of data from Mobile App Testing on tests done by many mobile app and game developers. Testdroid Cloud is an online cloud-based platform for mobile developers to test that their apps work perfectly on the devices that their customers use.

During this period, over 17.7 million tests were run on 288 distinct Android hardware models. To be clear, different versions of some popular models were tested but are counted in the data as one distinct device (such as the Samsung Galaxy S4 GT-i9505 running 4.2.2, API level 17). Some popular devices also had different versions of a single OS, such as the Google Nexus 7 ME370T with Android OS version 4.1.2 (API level 16), 4.2.2 (API level 17) and 4.3 (API level 18).

All tests were automated, using standard Android instrumentation and different test-automation frameworks. In case you are not familiar with instrumentation, Android has a tutorial that explains basic test automation. Also, the tests caught problems through logs, screenshots, performance analysis, and the success-failure rate of test runs.

Note: The data includes all test results, from the earliest stage (=APK ready) to when the application gets “finalized.” Therefore, it includes the exact problems that developers encountered during this process.

The goal for this research was to identify the most common problems and challenges that Android developers face with the devices they build for. The 288 unique Android device models represent a significant volume of Android use: approximately 92 to 97% of global Android volumes, depending on how it gets measured and what regions and markets are included. This research represents remarkable coverage of Android usage globally, and it shows the most obvious problems as well as the status of Android hardware and software from a developer’s point of view.

We’ll dive deep into two areas of the research: Android software and hardware. The software section focuses on OS versions and OEM customizations, and the hardware section breaks down to the component level (display, memory, chipset, etc.).

Recommended reading: Bringing Your App’s Data To Every User’s Wrist

Android Software

Your app is software, but a lot of other software is involved in mobile devices, too. And this “other” software can make your software perform differently (read “wrong”). What can you do to make sure your software works well with the rest of the software in devices today? Let’s first look at some of the most common software issues experienced by app developers.

Android OS has been blamed for platform fragmentation, making things very difficult for developers, users and pretty much every player in the ecosystem to keep up. However, that is not exactly the case. The OS very rarely makes things crack by itself — especially for app developers. More often it also involves OEM updates, hardware and many other factors — in addition to the OS.

One reason why Android is tremendously popular among mobile enthusiasts and has quickly leaped ahead of Apple’s iOS in market share is because it comes in devices of all shapes, prices and forms, from tens of different OEMs.

Also, the infrastructural software of Android is robust, providing an excellent platform with a well-known Linux kernel, middleware and other software on top. In the following sections, we’ll look at the results of our research, broken down by OS version, OEM customizations and software dependencies.

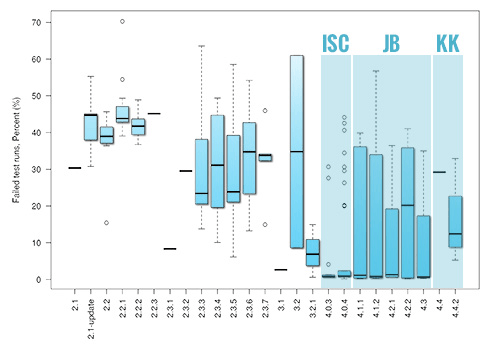

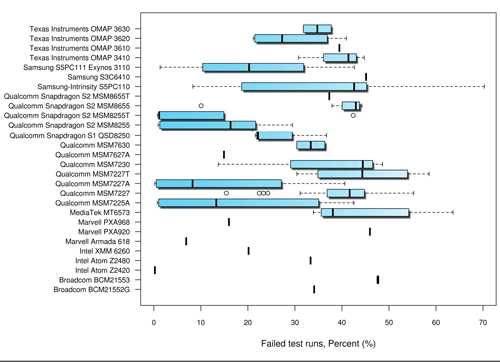

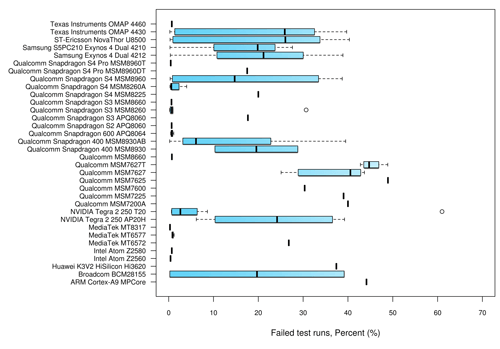

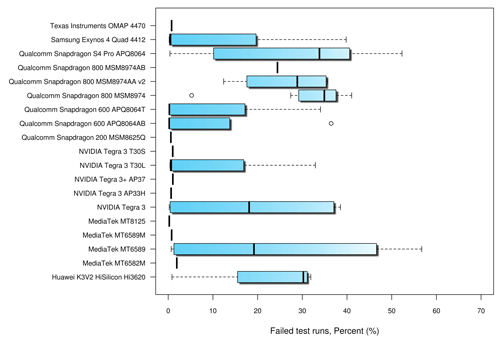

How To Read The Graphs

The findings from this study are plotted on graphs. In those graphs, the dark black line is the median (50%) of the failure percentage of different devices in that group. The lines above the bars mark the upper quartile (75%), and the lines below mark the lower quartile (25%). The dashed lines are the maximum (top or right) and minimum (bottom or left). The circles represent outliers.
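For readers who want to reproduce this kind of plot from their own test results, here is a minimal sketch in Java of the statistics behind it. The class name `BoxPlotSummary` is illustrative, and two details are assumptions rather than something the study states: quartiles are computed by linear interpolation between ranks, and a value counts as an outlier when it lies more than 1.5 times the interquartile range away from the box.

```java
import java.util.Arrays;

/** Five-number summary of per-device failure percentages, as drawn in a box plot. */
final class BoxPlotSummary {
    final double min, q1, median, q3, max;

    BoxPlotSummary(double[] values) {
        if (values.length == 0) {
            throw new IllegalArgumentException("At least one value is required");
        }
        double[] sorted = values.clone();
        Arrays.sort(sorted);
        min = sorted[0];
        max = sorted[sorted.length - 1];
        q1 = percentile(sorted, 0.25);
        median = percentile(sorted, 0.50);
        q3 = percentile(sorted, 0.75);
    }

    /** Linear interpolation between the two closest ranks (an assumed convention). */
    static double percentile(double[] sorted, double p) {
        double rank = p * (sorted.length - 1);
        int lo = (int) Math.floor(rank);
        int hi = (int) Math.ceil(rank);
        return sorted[lo] + (rank - lo) * (sorted[hi] - sorted[lo]);
    }

    /** Assumed outlier rule: farther than 1.5 x IQR from the lower or upper quartile. */
    boolean isOutlier(double value) {
        double iqr = q3 - q1;
        return value < q1 - 1.5 * iqr || value > q3 + 1.5 * iqr;
    }
}
```

Feeding it the failure percentages of all devices in one group gives the median and the two quartiles described above; `isOutlier` flags the devices that would be drawn as circles under the assumed rule.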

OS And Platform Versions

We’ll start with the most important factor in the problems experienced by app developers: Android OS versions. This dramatically affects which APIs developers can use and how those APIs are supported in a variety of devices.

Many developers have experienced all of the great new features, improvements and additions that Android has brought version by version. Some late-comers have played with only the latest versions, but some have been developing apps since the early days of Cupcake (1.5) and Donut (1.6). Of course, these versions are not relevant anymore.

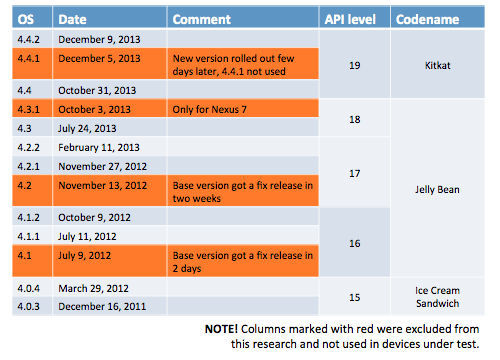

The following table shows the release dates for the various OS versions (along with their code names) and notes why certain versions were excluded or were not used on the devices being tested.

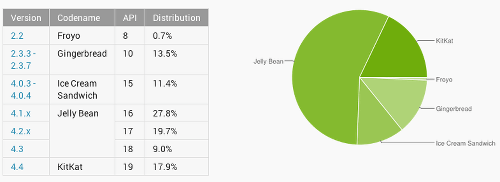

The most typical situation with app developers who want to maximize support for all possible variants is that they’ll start testing from version 4.0 (Ice Cream Sandwich, API level 14). However, if you really want to maximize coverage, start from version 2.3.3 (Gingerbread, API level 10) and test all possible combinations up to the latest release of Kit Kat (currently 4.4.4 and, hopefully soon, Android L). Versions older than 4.0 still have serious use — and will continue to for some time.

When it comes to the latest versions of Android — and let’s count Ice Cream Sandwich (4.0.3 to 4.0.4), Jelly Bean (4.1.1 to 4.3) and Kit Kat (4.4 to 4.4.2) among them — Ice Cream Sandwich is clearly the most robust platform. OEM updates were obviously the least problematic on this version, and the majority of tested apps worked very well on API level 15 (4.0.3 to 4.0.4).

The upgrade to Jelly Bean and API level 16 didn’t significantly introduce new incompatibility issues, and the median failure percentage remained relatively low. However, API level 16 had many outlier cases, and for good reasons. For instance, more problems were reported with Vsync, extendable notifications and especially with support for the lock screen and home screen rotation.

API level 17 brought improvements to the lock screen, and this version was generally stable up to version 4.2.2. The failure rate went up when the majority of OEMs introduced their updates to this version. Apparently, it became more problematic for users than previous versions.

Perhaps most surprisingly, the failure rate went up when Kit Kat API level 19 was released. The average failure percentage reached nearly the same level as it was with Gingerbread. Google patched Kit Kat quite quickly with two releases (4.4.1 and 4.4.2). Of those, 4.4.2 seemed to live much longer, and then the 4.4.3 update came out more than half a year later.

Key Findings From OS Versions

- On average, 23% of apps behave differently when a new version is installed. The median error percent was the smallest with Ice Cream Sandwich (1%), then Jelly Bean (8%), Honeycomb (8%), Kit Kat (21%) and, finally, Gingerbread (30%). This is actually lower than what was found in the study conducted with Q4 data (a 35% failure rate).

- Despite old Android versions being used very little and Gingerbread being the oldest actively in use, some applications (40% of those tested) still work on even older versions (below 2.2). In other words, if an app works on version 2.2, then it will work 40% of the time in even older versions as well.

- Over 50% of Android OS updates introduced problems that made apps fail in testing.

- Testing failed 68% of the time when 5 apps were randomly picked out of 100.

- The average duration of testing was 57 cycles per platform. Old versions were tested less than new ones: Gingerbread (12 test cycles), Ice Cream Sandwich (17), Jelly Bean (58) and Kit Kat (95).

- An average testing cycle reduced 1.75% of bugs in the code overall.

Note: A test cycle constitutes an iteration of a particular test script executed in one app version. When an app is changed and the test remains the same, that is counted as a new test cycle.

Tips And Takeaways

- For maximal coverage either geographically or globally, using as many physical devices as possible is recommended. Track your target audience’s use of different OS versions. The global status of versions is available on Google’s dashboard.

- All OS versions above 2.3.3 are still relevant. This will not likely change soon because users of Gingerbread and Ice Cream Sandwich represent nearly one quarter of all Android users, and many of them do not update (or would have done so already).

- If you want to cover 66% of OS volume, then testing with Kit Kat (4.4.x) and Jelly Bean (4.1 to 4.3) is enough (covering API 16 to 19).

- To cover 75% of OS volume, test from version 4.0.3 (API level 15) upwards.

- We recommend testing the following devices to maximize coverage:

- Amazon Kindle Fire D01400 — 2.3.4

- HTC Desire HD A9191 — 2.3.5

- Huawei Fusion 2 U8665 — 2.3.6

- Sony Xperia U ST25i — 2.3.7

- Asus Eee Pad Transformer TF101 — 4.0.3

- LG Lucid 4G — 4.0.4

- HTC One S X520e — 4.1.1

- Motorola Droid XYBOARD 10.1 MX617 — 4.1.2

- Acer Iconia B1-A71 — 4.2

- BQ Aquaris 5 HD — 4.2.1

- HTC One mini M4 — 4.2.2

- Samsung Galaxy Note II GT-N7100 — 4.3

- LG Google Nexus 5 D821 — 4.4

- HTC One M8 — 4.4.2

Note: These devices were selected because they are a good base to test certain platform versions, with different OEM customizations included. These devices are not the most problematic; rather, they were selected because they provide great coverage and are representative of similar devices (with the same version OS, from the same manufacturer, etc.).
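When you assemble your own device matrix, it helps to check which API levels it actually exercises. The following Java sketch maps version strings to API levels for the range discussed in this article (2.3 to 4.4.x) and reports the levels that no device in a list covers. The class and method names are illustrative, not part of any Android API.

```java
import java.util.Set;
import java.util.TreeSet;

final class ApiLevels {
    /** Maps a version string such as "4.2.2" to its API level (2.3 through 4.4.x only). */
    static int apiLevelFor(String version) {
        String[] parts = version.trim().split("\\.");
        int major = Integer.parseInt(parts[0]);
        int minor = parts.length > 1 ? Integer.parseInt(parts[1]) : 0;
        int patch = parts.length > 2 ? Integer.parseInt(parts[2]) : 0;

        if (major == 2 && minor == 3) {
            return patch >= 3 ? 10 : 9;      // 2.3.3 and later are API level 10
        }
        if (major == 4) {
            switch (minor) {
                case 0: return patch >= 3 ? 15 : 14; // 4.0.3 and 4.0.4 are API level 15
                case 1: return 16;
                case 2: return 17;
                case 3: return 18;
                case 4: return 19;
            }
        }
        throw new IllegalArgumentException("Version outside the range covered here: " + version);
    }

    /** Returns the API levels in [fromLevel, toLevel] that none of the given versions covers. */
    static Set<Integer> missingLevels(Iterable<String> versions, int fromLevel, int toLevel) {
        Set<Integer> missing = new TreeSet<>();
        for (int level = fromLevel; level <= toLevel; level++) {
            missing.add(level);
        }
        for (String version : versions) {
            missing.remove(apiLevelFor(version));
        }
        return missing;
    }
}
```

Run against the OS versions of the devices listed above with a range of 10 to 19, `missingLevels` returns 11, 12, 13 and 14, that is, Honeycomb (API levels 11 to 13) and the first Ice Cream Sandwich releases (API level 14).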

OEM Customizations

One stumbling block with Android — like any open-source project — is its customizability, which exposes the entire platform to problems. What is called “fragmentation” by developers would be considered a point of differentiation for OEMs. In recent years, all Android OEMs have eagerly built their own UI layers, skins and other middleware on top of vanilla Android. This is a significant source of the fragmentation that affects developers.

In addition to UI layers, many OEMs have introduced legacy software — tailored to Android — and it, too, is preventing developers from building their apps to work identically across different brands of phones.

Drivers also cause major problems, many related to graphics. Certain chipset manufacturers have done an especially bad job at updating their graphics drivers, which makes the colors in apps, games and any graphic content inconsistent across phones. Developers might encounter entirely different color schemes on various Android devices, none close to what they intended.

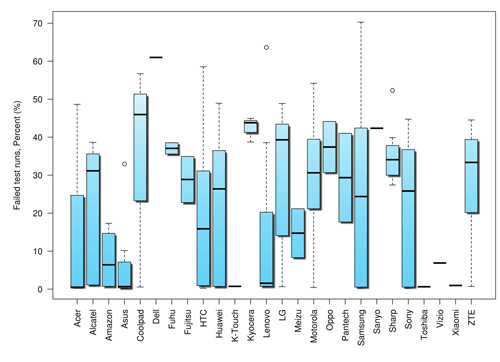

Key Findings Related To OEM Customizations

- No surprise, Samsung devices are among the most robust and the most problematic. For example, Galaxy Nexus GT-I9250 is one of the most robust devices in all categories, while the Samsung Infuse 4G SGH-I997 failed the most in those same categories.

- Asus devices, along with Xiaomi devices, are the most robust. Xiaomi implements Android differently, however; for instance, pop-ups make the controllability of some devices impossible.

- Coolpad has, by volume, the most problems. Among the biggest brands, HTC has the least error-prone devices.

- All of the big brands (HTC, Samsung, Sony and LG) have customizations that are problematic for certain types of applications. For example, Sony’s customizations break some basic functionality, such as viewing PDFs. Samsung’s customizations have problems with taking photos with the camera and interrupting calls.

Tips And Takeaways

- The most common misconception is that Nexus devices are the best for testing. Those devices typically have the latest OS version and little to no OEM customization, so they do not reveal the problems that customized devices introduce.

- Pay attention to carrier- and operator-branded devices as well. Some of them implement Android totally differently, regardless of the name of the device or brand.

Dependencies On Other Software

Some applications require access to other apps. For example, many apps and games incorporate social media, and in the worst implementations, developers have assumed that every device integrates popular social media apps. Some devices come with those social media apps preinstalled, but if not, then your application just won’t work properly. Unfortunately, problems with software dependency turn into problems for app developers.

Key Findings Related To Dependencies On Other Software

- 92% of apps integrate with the given platform to show ads.

- 65% of apps integrate with at least one social media platform.

- 48% of apps integrate with at least two social media platforms.

- 33% of apps integrate with at least three or more social media platforms.

Tips And Takeaways

Check whether the software that your application depends on is installed on all devices. Do not assume that all of those third-party apps and other software exist on every device!
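A minimal sketch of that advice in Java follows. It is written against a plain `Predicate<String>` so that it runs anywhere; `isInstalled` is a hypothetical hook that, on a real device, would typically wrap a package-manager lookup. The class name, the route names and the package name used in the example are assumptions made for illustration.

```java
import java.util.function.Predicate;

final class ShareTargetChooser {
    /** Where a "share" action should be routed. */
    enum Route { NATIVE_APP, WEB_FALLBACK }

    /**
     * Picks a route without assuming that the companion app exists.
     * isInstalled is a hypothetical hook supplied by the caller.
     */
    static Route choose(String packageName, Predicate<String> isInstalled) {
        return isInstalled.test(packageName) ? Route.NATIVE_APP : Route.WEB_FALLBACK;
    }
}
```

A call such as `ShareTargetChooser.choose("com.example.social", installedPackages::contains)` then degrades to the web route on devices where the app is missing, instead of failing.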

Android Hardware

The Android device eco-system continues to grow and evolve. Many handset manufacturers continue to churn out devices with amazing specifications and hardware and with different form factors. Naturally, the sheer number of possible device configurations presents a challenge to developers. Ensuring that an application works well on the widest range of devices is crucial and is the easiest way to avoid frustrating end users.

Most developers carefully weigh the pros and cons of testing on emulators and testing on real devices to follow the right strategy. Typically, emulators are used in initial stages of development, while real devices are brought in later in the game. The platform on which you build and test your next big thing should be as close to real-world conditions as possible, from day one. In our experience, that is a cornerstone of creating a successful app and gaining those hundreds of millions of downloads.

Screen Resolution, Display And Colors

The key to success with any app — especially games — is to get the UI and graphics right. This is a challenge because there are a lot of different resolutions, a lot of ways to present content and, naturally, a lot of different hardware.

With new high-end devices gaining popularity among users, the Android eco-system seems to be quickly headed towards high-resolution displays. High-resolution screens obviously make for a better user experience, but to take advantage of this, developers need to update their apps and test for them.

However, display resolution is not always the cause of headaches for developers. In fact, applications fail more often because of screen orientation, density, color and the overall quality of the device’s screen. Many games, for example, suffer from poor-quality displays: a button in a certain part of the UI might be shown in a different shade than the one intended by the designer or, in some cases, in a totally different color.

This is a common issue. It can result not only from hardware components, but also when display drivers are implemented incorrectly. Graphical content could even be invisible in some apps because of color brightness or low pixel density. This kind of failure is apparent from screenshots in our tests.

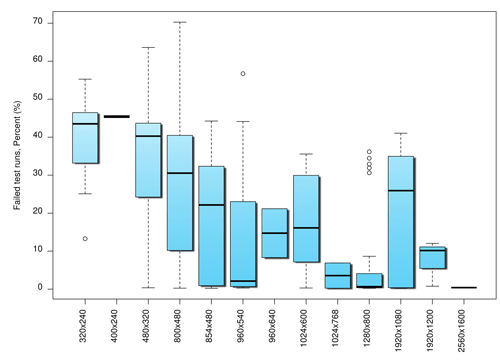

No surprise that as the display resolution gets higher, apps present fewer problems. There are a few exceptions to this, but they can be attributed to how certain OEMs use various resolutions in both low- and high-end devices.

The Android OS provides an environment to develop apps consistently across devices, and it handles most of the work of adjusting each application’s UI to the given screen. Also, it comes with APIs that enable the developer to optimize an application’s UI for a particular screen size or density. For example, you might want one UI for tablets and another for handsets. Although the OS scales and resizes to make an application work on different screens, you should still optimize your application for different screen sizes and densities. In doing so, you’ll improve the user experience on all devices, and users will believe that your application was designed for their particular device, rather than simply stretched to fit their screen.
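The arithmetic behind density-independent sizing is simple enough to sketch in plain Java. Android defines 1 dp as 1 px on a 160 dpi screen, so a size in dp scales by `dpi / 160`. The class name and the 600 dp threshold for switching to a tablet layout are assumptions here; the threshold is a widely used convention, not a rule taken from this study.

```java
final class ScreenMetrics {
    /** Baseline density at which 1 dp equals 1 px. */
    static final float BASELINE_DPI = 160f;

    /** Assumed smallest-width threshold for choosing a tablet layout. */
    static final float TABLET_MIN_WIDTH_DP = 600f;

    /** Converts density-independent pixels to physical pixels. */
    static int dpToPx(float dp, int densityDpi) {
        return Math.round(dp * densityDpi / BASELINE_DPI);
    }

    /** Shortest screen side expressed in dp, independent of orientation. */
    static float smallestWidthDp(int widthPx, int heightPx, int densityDpi) {
        return Math.min(widthPx, heightPx) * BASELINE_DPI / densityDpi;
    }

    /** True when the shortest side is at least the assumed tablet threshold. */
    static boolean useTabletLayout(int widthPx, int heightPx, int densityDpi) {
        return smallestWidthDp(widthPx, heightPx, densityDpi) >= TABLET_MIN_WIDTH_DP;
    }
}
```

A 48 dp button, for instance, comes out as 48 px at 160 dpi and 72 px at 240 dpi, which is why fixed pixel sizes look wrong on one of the two screens. Because `smallestWidthDp` uses the shorter side, the layout decision does not flip when the device rotates.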

Key Findings Related To Screen Resolution

- The median of error was the smallest in resolutions of 2560 × 1600 pixels (0.4%), then 1280 × 800 (0.7%) and 1280 × 720 pixels (1.5%). It was highest in resolutions of 400 × 240 (45%) and 320 × 240 pixels (44%).

- The most typical problem relates not to resolution or the scaling of graphical content, but to how content adjusts to the orientation of the screen. According to our data, problems with screen orientation are 78% more likely than problems with the scaling of graphical content.

- Wrong color themes and color sets were reported in 18% of devices, while 24% of apps appeared correctly on all 288 different devices.

- In some cases, an Android OS update or OEM update fixed a display issue, but the percentage was relatively low (6%).

- Apps worked nearly the same on high- and low-end devices (based on chipset) with the same resolution (just a 2% difference).

- In general, the bigger a device’s display, the more likely an app is to perform well.

Tips And Takeaways

- Design graphical content for multiple screen sizes, but make it scale to different sizes automatically. This is strictly a design issue.

- Many problems with resolution, display and color can be avoided by designing an application correctly in the first place and by testing it thoroughly on real Android devices. Emulators won’t yield reliable results or lead to a robust application.

Memory

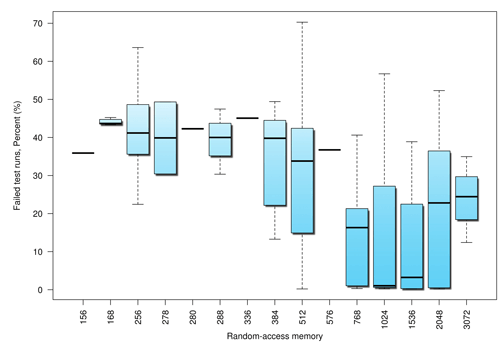

Anything can happen when an Android device runs out of memory. So, how does your application work when a device is low on memory?

Developers deal with this problem rather often. Many apps won’t run on certain Android devices because they consume too much memory. Typically, the most popular apps — ones rated with four and five stars — do not have this problem because memory management has been implemented the right way, or else they have been excluded from being downloaded on low-end devices entirely. Too many of today’s apps were originally developed for high-end devices and can’t be run on low-end devices. What is clear is that you can easily tackle this problem by checking how your app works with different memory capacities.

Memory seems to significantly affect how a device copes with an application. If a device’s RAM is equal to or less than 512 MB, then the device will crash 40% of the time when certain applications are running.

If a device’s RAM is higher than 512 MB, then the device will crash approximately 10% of the time. That is statistically significant.
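One practical way to check how an app copes with different memory capacities is to estimate large allocations before making them. The Java sketch below does this for an uncompressed 32-bit bitmap, which needs 4 bytes per pixel. Note that it reasons about the heap available to the app, which is smaller than the device RAM figures quoted above; the class name and the 50% safety margin in `fitsNow` are assumptions chosen for illustration.

```java
final class BitmapBudget {
    /** Bytes per pixel for a 32-bit ARGB bitmap. */
    static final int BYTES_PER_PIXEL = 4;

    /** Memory needed to hold an uncompressed bitmap of the given size. */
    static long bitmapBytes(int widthPx, int heightPx) {
        return (long) widthPx * heightPx * BYTES_PER_PIXEL;
    }

    /** True if the bitmap fits within the given fraction of the heap that is still unused. */
    static boolean fits(int widthPx, int heightPx,
                        long maxHeapBytes, long usedBytes, double fraction) {
        long available = maxHeapBytes - usedBytes;
        return bitmapBytes(widthPx, heightPx) <= available * fraction;
    }

    /** Same check against the current runtime, with an assumed 50% safety margin. */
    static boolean fitsNow(int widthPx, int heightPx) {
        Runtime runtime = Runtime.getRuntime();
        long used = runtime.totalMemory() - runtime.freeMemory();
        return fits(widthPx, heightPx, runtime.maxMemory(), used, 0.5);
    }
}
```

A 1920 × 1080 bitmap needs 8,294,400 bytes, so it fails the check on a 16 MB heap with 12 MB already in use but passes on a 64 MB heap. When the check fails, loading a downscaled version is usually a better outcome than crashing.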

Key Findings Related To RAM

- The median of error was lowest with RAM of 1024 MB (1%), then 1536 MB (3%) and next 768 MB (16%). It was highest with RAM of 336 MB (45%) and 168 MB (44%).

- Approximately 10% of apps run on devices with more than 512 MB of RAM crash due to memory-related issues.

- Many OEMs do not give their devices the 512 MB of RAM that Google recommends as the minimum. Such devices are 87% more likely to have problems than devices with more memory.

- The probability of failure drops 41% for devices that contain more than 576 MB of memory.

Chipsets

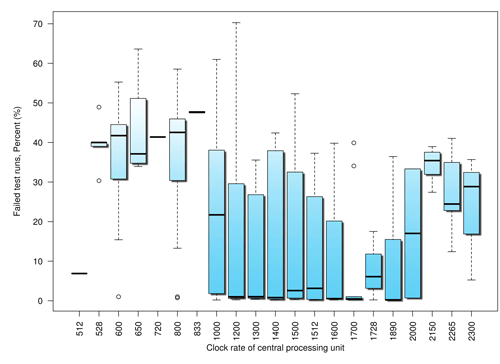

The difference in performance between silicon chipsets (CPU, GPU, etc.) is pretty amazing. This is not necessarily obvious to end users. Some people pay too much attention to the CPU’s clock rate, rather than the chipset and other factors that affect the performance of the device.

Imagine developing something that is targeted at high-end devices but that runs on low-end hardware. It simply wouldn’t work. The user experience would obviously suffer because a high-end app running on a low-end chipset with a low clock-frequency rate would suffer in performance. Many apps suffer severely because the activities on screen are synced with the clock, and the UI can’t refresh quickly enough to keep up with it. For users, this translates into poorly implemented graphics, blinking screens and general slowness.
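One way to make "the UI can’t refresh quickly enough" measurable is a per-frame time budget. The Java sketch below assumes a display that refreshes at a fixed rate (60 Hz is a common figure, giving roughly 16.7 ms per frame) and counts the frames that took longer than that to render. The class name and the idea of collecting frame times into an array are assumptions; how you measure frame times on a device is outside the scope of this sketch.

```java
final class FrameBudget {
    /** Time available to render one frame at the given refresh rate. */
    static double budgetMillis(double refreshRateHz) {
        return 1000.0 / refreshRateHz;
    }

    /** Counts frames whose render time exceeded the per-frame budget. */
    static int missedFrames(double[] frameTimesMillis, double refreshRateHz) {
        double budget = budgetMillis(refreshRateHz);
        int missed = 0;
        for (double frameTime : frameTimesMillis) {
            if (frameTime > budget) {
                missed++;
            }
        }
        return missed;
    }
}
```

Comparing the share of missed frames for the same test run on a low-end and a high-end chipset gives a concrete number for the slowness that users perceive.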

Let’s first look at the clock rate of devices that we tested.

To compare chipsets more easily, we categorized them based on their number of cores: single, dual and quad.

Unlike the CPU architecture in chipsets — which is manufactured primarily by ARM — the graphics portion is manufactured by multiple vendors, which gives some semiconductor companies the flexibility to pick and choose which GPU goes best with the CPU in their chipsets.

Back in the day, the primary job of the graphics card was to render high-end graphical content and 3-D images on the screen. Today, GPUs are used for much more than games and are as crucial as the CPU, if not more so.

Today, all of the recent Android OS versions — Ice Cream Sandwich, Jelly Bean, Kit Kat and so on — rely heavily on the GPU because the interface and all animations are rendered on it, which is how you’re able to achieve transition effects that are buttery smooth. Today’s devices have many more GPU cores than CPU cores, so all graphics and rendering-intensive tasks are handled by them.

Key Findings Related To Chipset

- Single core. The median of error was lowest in Intel Atom Z2420 (0.2%), then in Qualcomm Snapdragon S2 MSM8255T (1.1%).

- Dual core. The median of error was lowest in MediaTek MT8317 (0.3%), then in Intel Atom Z2560 (0.4%).

- Quad core. The median of error was lowest in both MediaTek MT8125 (0.2%) and Qualcomm Snapdragon 600 APQ8064AB and APQ8064T (0.2%).

- Many high-end devices are updated to the latest version of Android and get the latest OEM updates, which makes them more vulnerable to problems (67%) than low-end devices with the original OS version.

- Clock rate doesn’t directly correlate with performance. Some benchmarks show significant improvement in performance, even when the user experience and the apps on those chipsets and devices don’t improve significantly.

- Power consumption is naturally a bigger problem in some batteries, and some devices run out of battery life quickly. However, this is tightly related to the quality and age of the battery and cannot be attributed solely to the chipset.

Tips And Takeaways

- Thoroughly test your application on all kinds of devices — low end, mid-range and high end — if it has heavy graphics (such as apps with video streaming) or uses the GPU heavily, to ensure maximal performance across an ecosystem.

- Do not assume that your app works across different chipsets. Chipsets have a lot of differences between them!

Other Hardware (Sensors, GPS, Wi-Fi, etc.)

Misbehaving sensors — and we’re not talking about sensors that are poorly calibrated or that cannot be calibrated — cause various problems in games that take input from the way the user handles the device. With GPS, the known problems are navigating indoors and not being able to reach satellites from some locations. To take a similar example with media streaming, video that is meant to be viewed in landscape mode might work well in landscape mode, but the user would have to rotate the device 180 degrees to see it right. Frankly, there is no way to properly test orientation with an emulator; also, things related to the accelerometer, geo-location and push notifications cannot be emulated or could yield inaccurate results.

Network issues come up every now and then, and a slow network will very likely affect the usability and experience of your application.

Conclusion

In this article, we’ve focused on two areas — Android software and hardware — and what app developers need to know about both. The Android eco-system is constantly changing and new devices, OS versions and hardware come out every week. This naturally presents a big challenge to application developers.

Testing on real devices prior to launch will make your app significantly more robust. In the next article, we will dive deep into this topic, covering the most essential testing methods, frameworks, devices and other infrastructure required to maximize testing.

How do you test mobile apps, and what devices do you use and why? Let us know which aspects of mobile app testing you would like us to cover in the next article.

Further Reading

- How To Improve Test Coverage For Your Android App Using Mockito And Espresso

- How We Optimized Performance To Serve A Global Audience

- Touch Design For Mobile Interfaces: Defining Mobile Devices (Excerpt)

- The Ultimate Guide To Push Notifications For Developers
