Privacy Guidelines For Designing Personalization

For interaction designers, it’s becoming common to encounter privacy concerns as part of the design process. Rich online experiences often require the personalization of services, involving the use of people’s information.

Because gathering information to personalize a customer experience can interfere with the overall experience — with negative consequences for the business — how do we navigate this increasingly difficult territory? What are the guidelines to follow when using data to personalize digital experiences, and how can organizations help people feel comfortable with personalization services that research clearly shows people want?

People Trade More Personal Information Now Than Ever Before

Exchanging personal information for things we want, whether physical or virtual, is nothing new. Long before the Internet, we freely published our home phone numbers and addresses in public directories. Remember the white pages? While they connected people and businesses in the same region, they opened the door to unwanted telephone marketing and junk mail.

People exchange privacy and personal information for social goods all the time — the trade just needs to be perceived as being worthwhile. In order to function properly, our civic and judicial systems, for example, require the use of our personal information, which routinely becomes public record.

In May 2014, Accenture Interactive published an online survey conducted among participants between 20 and 40 years of age:

- 80% believe that total privacy in the digital world is a thing of the past.

- Only 64% are concerned that websites track their behavior to present content that matches their interests, a drop from 85% just two years prior in 2012.

- Only 30% feel vendors are transparent enough with how they use personal data.

- 64% are willing to be tracked in a physical store to receive a text message with a relevant offer.

These findings reveal an interesting story: While digital consumers broadly accept the loss of privacy in the digital space to receive personalized content, they are wary of how businesses store their information and don’t fully trust how that information is used.

In considering what might discourage customers from sharing personal information, we should bear in mind the difference between privacy and security. While both may give us reason to be cautious, security has to do with a business’s ability to protect data from hackers, whereas privacy involves how customers are protected from businesses misusing their data. The first is an external threat, the second an internal one.

How People Currently Trade Privacy For Personalization

Social Networks

What we trade: Social networks are where we trade most. We disclose demographic data such as gender, age, address and birthplace, and share our preferences for music, food, brands and even friends. Everything can be tracked — the amount of time we spend online, our activities and the types of content we consume and create, revealing valuable behavioral information and insight into our psychology.

What we receive: We gain access to a wide range of services offered by social networks, including keeping in touch with loved ones, finding things we like, playing games, establishing business networks and seeking employment.

Example: Pinterest is one of the best examples of content personalization. As Kevin Jing, engineering manager on the visual discovery team at Pinterest, explains on their engineering blog, “Discovery on Pinterest is all about finding things you love, even if you don’t know at first what you’re looking for.”

Requiring a high degree of personalization, Pinterest recommends content based on deep learning in the form of object recognition. Called visual search, the technology finds and displays visually similar images to increase user engagement, opening the door for intelligently targeted ads and greater monetization of the platform.
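To make the idea of "visually similar" concrete, here is a minimal sketch of similarity ranking. It is not Pinterest's implementation. It assumes each image has already been reduced to a short list of numbers (a feature vector) by some recognition model, and it ranks a small hypothetical catalog by cosine similarity to a query image. The catalog names, the vectors and the `most_similar` helper are all invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no direction to compare
    return dot / (norm_a * norm_b)


def most_similar(query, catalog, top_k=2):
    """Rank catalog items by similarity to the query and keep the top_k names."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in catalog.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in scored[:top_k]]


# Hypothetical feature vectors; a real system would derive these from images.
catalog = {
    "red_sofa": [0.9, 0.1, 0.0],
    "crimson_armchair": [0.8, 0.2, 0.1],
    "blue_lamp": [0.0, 0.2, 0.9],
}
query = [1.0, 0.1, 0.0]  # features of the image the user is looking at

print(most_similar(query, catalog))
```

The point for designers is that the recommendation depends on behavioral input: what a person looks at becomes the query, which is why this kind of feature is part of the privacy trade.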

E-Commerce

What we trade: By interacting with custom filters and allowing our activities and purchases to be recorded, we share preferences for product features and our buying habits. When we connect our social networks, information about friends and colleagues provides an additional layer of useful data. After all, we often buy what those in our social groups buy.

What we receive: For consumers, the purpose of e-commerce is to find and acquire products we want. The deeper the understanding a seller has of our psychology, the better the product placement. E-commerce personalization reduces friction around finding the things we like and makes those things easier to purchase.

Example: Consider Bombfell, a clothing service for men. After entering your height, weight, body shape, skin tone, clothing size, favorite brands and other preferences, Bombfell becomes your personal fashion advisor and personal shopper.

As Bombfell explains in its FAQ, “We use technology on the back-end that surfaces recommendations for each user based on fit and style. But a human stylist (the sort with years of experience working in production, design and merchandising in men’s fashion) has the final say.”

Public Services

What we trade: Beyond normal census information, we provide public services with more preferences and behavioral data than ever before. And it’s not just consumption rates and patterns, but our preferences for the services themselves.

What we receive: From municipal services like utilities, taxation and legal support to tourism and travel tips, sharing our personal information enables providers to offer efficient access to these and many other essential services. Because the needs of individual citizens are unique, there is much to gain from personalization.

Example: Government portals like the one used by the state of Indiana are becoming more commonplace. Called my.IN.gov, the service is “a personalized dashboard that empowers the user to create a unique content experience.”

By setting up an account — and connecting social networks — citizens gain access to a customizable dashboard including personalized services, maps, local information and travel advisories. They can show and hide tiles of interest, indicate favorite services, manage newsletter subscriptions and even view interactive maps with rich state-wide statistical data.

The US federal government is not far behind, with its portal launching soon.

Internet Of Things

If we look to the future, there is a growing trend of exchanging privacy for personalization in the emerging space known as the Internet of Things (IoT). Designing for user experiences in this space goes beyond purely digital interactions to ones that include ecosystems of physical and digital services.

Disney’s MyMagic+ is the platform that supports its Magic Band, a device using RFID technology to enable guests to authenticate and unlock a wide range of benefits, including gaining admission to the park and making purchases. Similar to mobile devices, the Magic Band tracks and communicates a customer’s location as they move within the park. By aggregating personal preferences, past activity and location data, the MyMagic+ platform provides guests with frictionless and highly personalized experiences when staying at the resort. As Disney states, “Aggregate information can be used to better understand guest behavior and make improvements to the guest experience (e.g., managing wait times and improving traffic flow).”

With connected devices capable of collecting vast amounts of consumer data, opportunities for the personalization of services are almost endless. A number of key players in the IoT space are competing to provide customers with intelligent virtual assistants for connected home services.

Amazon’s Echo promises integration between a number of connected services and uses audio sensors backed by ever-learning artificial intelligence. Always on, it awaits your command to start listening to you and your home environment. The result is an intelligent system that learns to anticipate needs and delivers a great degree of control over connected devices.

It’s easy to see the significant risk to privacy represented by this type of always-on service. Given that audible activities in your home are captured and sent to the cloud for processing, third parties with access to the cloud (legally or not) could potentially access your data.

The following excerpt from Disney’s privacy policy captures the many potential benefits to users from personalization within the Internet of Things, and also the considerable risks to privacy:

“MyMagic+ provides an even more immersive, personalized and seamless Walt Disney World Resort experience than ever before. If you choose to participate in MyMagic+, we collect your information from you online and when you visit the Walt Disney World Resort. We value our guests and are dedicated to treating the information you share with us with care and respect.”

Three Guidelines For The Use Of Personal Data

The following guidelines capture the key considerations we should keep in mind.

- Get informed consent.

- Make fair trades.

- Give the customer ongoing control over their data.

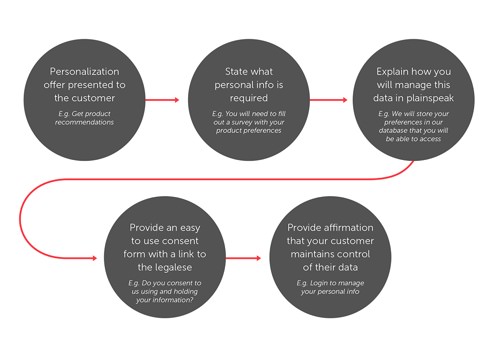

Get Informed Consent

Taking something of value from someone else without their consent is… yup, stealing.

And agreement from someone who is not adequately informed does not amount to consent. Many people do not understand the full implications of providing their personal information.

The legal guidelines for collecting and using personal data are well established; however, they don’t require an educational component — the onus is currently on users to understand the legalese.

People should always understand the nature of the trade. If they don’t, they will figure it out eventually and either love or resent you for how the exchange was managed.

Make it easy to understand the facts about how data is used and stored. Long type-heavy pages don’t accomplish this. Hiding information away does not count either. This is where UX design plays an important role. The interface should promote understanding and make information accessible.

The Government of Canada’s “Guidelines for Online Consent” is a good model to follow. It states that consent must be meaningful and that individuals should “understand what they are consenting to.”
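For teams handing designs off to developers, one way to keep consent meaningful is to record exactly what the person agreed to and in what words. The sketch below is one possible shape for such a record, not a legal or standard format. The `ConsentRecord` class, its field names and the `may_use` helper are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional, Tuple


@dataclass
class ConsentRecord:
    """Hypothetical record of what a user agreed to, and in what words."""
    user_id: str
    purpose: str                   # the single purpose the user agreed to
    data_fields: Tuple[str, ...]   # the specific data covered by this consent
    summary_shown: str             # the plain-language text the user actually saw
    granted_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    withdrawn_at: Optional[datetime] = None

    def withdraw(self) -> None:
        """Mark the consent as withdrawn from now on."""
        self.withdrawn_at = datetime.now(timezone.utc)

    def covers(self, purpose: str, field_name: str) -> bool:
        """True only for the stated purpose, a listed field, and active consent."""
        return (
            self.withdrawn_at is None
            and self.purpose == purpose
            and field_name in self.data_fields
        )


def may_use(records, user_id, purpose, field_name):
    """Check whether any active record permits this use of this field."""
    return any(r.user_id == user_id and r.covers(purpose, field_name) for r in records)


record = ConsentRecord(
    user_id="u1",
    purpose="recommendations",
    data_fields=("favorite_brands",),
    summary_shown="We use your favorite brands to suggest clothing.",
)
print(may_use([record], "u1", "recommendations", "favorite_brands"))
```

Storing the summary the user was shown ties the consent to a specific explanation, and checking purpose and field together prevents data gathered for one reason from quietly being used for another.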

Make Fair Trades

Give people good value. This is just good customer service. Making fair trades with people builds trust with users, strengthens your brand and is an effective long-term strategy.

There are significant risks with being perceived as unfair, even if that perception is wrong. According to research published in 2002 by NPG, most people will punish perceived unfairness when given the chance, even when there is no immediate payoff for them. The negative emotions that arise from unfairness provide ample motivation for customers to take action.

Netflix suffered such “altruistic punishment” from customers in 2011, when it lost a million customers in a single month as a result of perceived unfairness from the unbundling of its DVD and streaming services.

Crucially, customers tend to infer bad motivation when asked for more without receiving greater value in return. To gain trust, clearly show users the value you offer as a result of using their personal information. This will mitigate the risk that you are perceived as being unfair and any resulting altruistic punishment that may ensue.

Give Ongoing Control To The Customer

Remember that personal information is not yours to keep. It’s still the user’s data, so give them control over it.

This has two implications:

- Make it easy for users to manage their personalization preferences.

- Allow users to easily take back their data when they no longer want you to have it.
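The two implications above can be sketched as a small in-memory store. This is an illustration under stated assumptions, not a description of any real product: the `PersonalDataStore` class and its method names are hypothetical, and a production system would also have to handle backups, logs and copies held by third parties.

```python
import json


class PersonalDataStore:
    """Hypothetical store that keeps users in control of their own data."""

    def __init__(self):
        self._profiles = {}

    def save(self, user_id, data):
        """Store a copy of the user's data with personalization switched on."""
        self._profiles[user_id] = {"personalization_enabled": True, "data": dict(data)}

    def set_personalization(self, user_id, enabled):
        """Let the user turn personalization on or off at any time."""
        self._profiles[user_id]["personalization_enabled"] = bool(enabled)

    def data_for_personalization(self, user_id):
        """Return data only if the user exists and currently allows its use."""
        profile = self._profiles.get(user_id)
        if profile is None or not profile["personalization_enabled"]:
            return {}
        return dict(profile["data"])

    def export(self, user_id):
        """Give users a readable copy of everything held about them."""
        return json.dumps(self._profiles[user_id]["data"], indent=2, sort_keys=True)

    def delete(self, user_id):
        """Remove the user's data; returns True if anything was removed."""
        return self._profiles.pop(user_id, None) is not None


store = PersonalDataStore()
store.save("u1", {"favorite_brands": ["Acme"], "size": "M"})
store.set_personalization("u1", False)   # preference change takes effect immediately
print(store.data_for_personalization("u1"))
print(store.export("u1"))
print(store.delete("u1"))
```

The design choice worth noting is that turning personalization off and deleting data are separate actions: a user can pause the use of their data without losing it, or take it back entirely.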

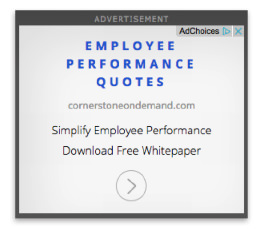

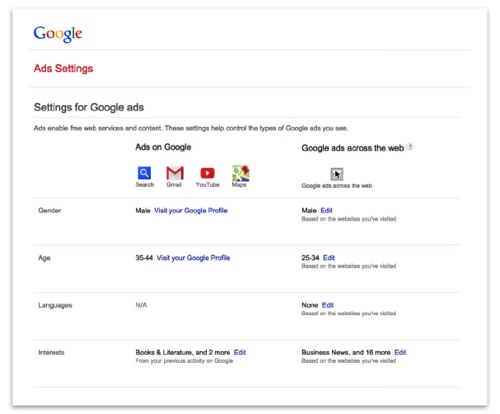

Google ads allows users to adjust their display ad preferences. The two buttons located in the top-right corner of display ads represent two different methods for users to take control.

Clicking the “x” hides the ad immediately, prompting the user to specify why the ad is not relevant and listing the choices: inappropriate, irrelevant or repetitive. Presumably, the feature enables Google to serve more relevant content in the future by allowing users to indicate which ads they don’t like and why.

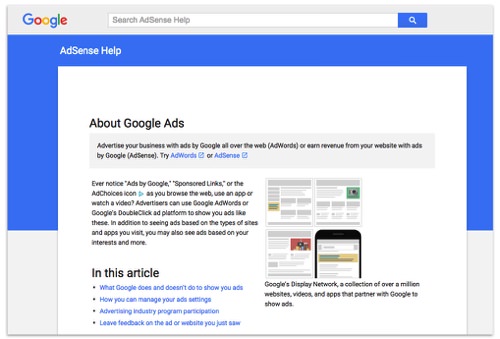

Clicking the “AdChoices” button directs users to an “About Google Ads” page. The page is currently text-heavy, offering little in the way of practical help on how to make changes to your profile, and it could be greatly improved.

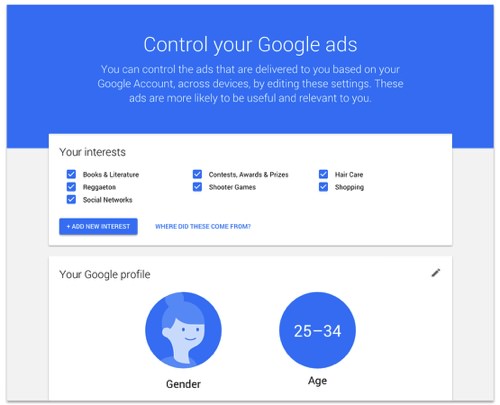

However, when users drill down past the landing page, Google provides a nicely designed and intuitive interface that allows users to control their ad preferences:

Interestingly, this page was recently redesigned, pointing to the value that Google places on customer privacy. For comparison, the previous version of the same page is below. It offered little direction on how to adjust privacy preferences and only minimal control.

While there is still room for improvement, when it comes to making it easy for customers to manage personalization preferences, Google provides a good model to follow.

Allow Customers To Take Back Their Data

It is genuinely difficult to find a good example of an organization allowing users to easily take back their data. Perhaps this lack of examples is telling.

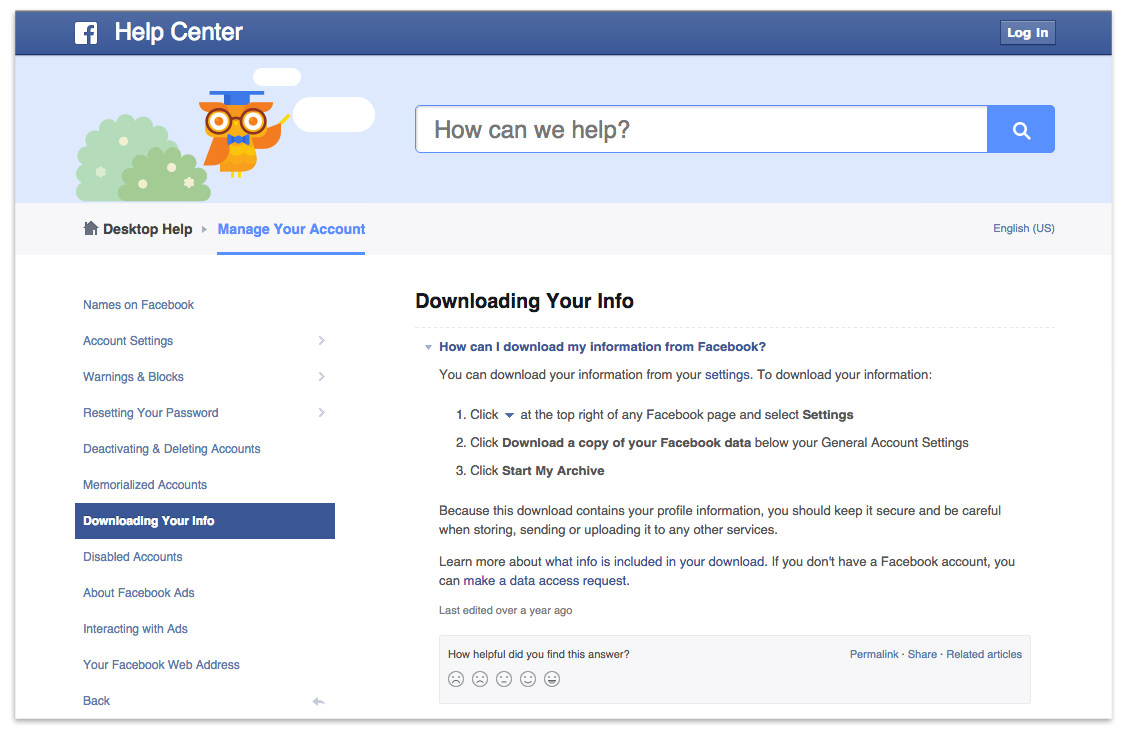

One of the largest collectors of customer data, Facebook allows users to download an archive of their data. From there, the account can be deleted. However, there is no clear language to indicate that Facebook actually deletes all data from its servers or whether it continues to maintain the user’s profile.

Were the process clearer, Facebook would almost satisfy the principle of giving ongoing control over data back to customers. But it would still have to provide an easy way to do so.

We are still far from having standardized best practices for giving customers full control over their personal information. It may be a while before it becomes common to expect greater control in this area. Maybe as digital consumers, we have grown accustomed to providing personal information without expecting businesses to grant us a means to easily maintain control. Perhaps we can influence change by increasing our expectations in this area — and asking for control.

Concluding Thoughts

CX and UX professionals are faced with the difficult task of helping clients navigate design decisions around privacy and personalization of digital services. It’s up to us to help clients understand the implications to business outcomes and ethics. To do this well, we must introduce privacy considerations into the design process as early as possible.

Clients’ customers expect that we make it easier for them to access services in ways that require personalization. However, many customers are not yet comfortable with this, raising concerns about the exchange — even though they want the benefits.

And even customers who are comfortable may be so because they don’t fully understand how their personal information is being collected and used. This puts the onus on businesses and UX professionals to adequately educate customers.

Savvy businesses will see this as an opportunity, rather than a threat. It creates an opportunity for organizations to differentiate themselves and earn loyalty from customers. Privacy is a competitive advantage with a legitimate upside.

By getting informed consent, making fair trades and giving customers ongoing control of their data, businesses can build trust with their customers, provide excellent customer experiences and build ongoing brand loyalty.

Further Reading

- Driving App Engagement With Personalization Techniques

- Real-Time Data And A More Personalized Web

- Applications Of Machine Learning For Designers

- Conversational Interfaces: Where Are We Today? Where Are We Heading?
