A Comprehensive Guide To UX Research

(This is a sponsored article.) Before embarking upon the design phase of any project, it’s critical to undertake some research so that the decisions you make come from an informed position. In this third article of my series for Adobe XD, I’ll be focusing on the importance of user research.

Your job title might not be “design researcher”, but that doesn’t mean you shouldn’t at the very least inform yourself of your users and their needs by undertaking at least some initial scoping research before you embark upon a project. User research should be a core part of every designer’s activity and, reflecting this fact, we’ve seen a growing focus on the importance of user research over time as our discipline has matured.

It’s critical to the success of any project — be it for an external client, for an internal project, or a new product you’re building — to adopt a user-first approach that positions the people that use what we build front and center.

In this article, I’ll introduce a cross-section of research methods that will help designers both to design new products and, as will often be the case, to redesign existing products. Whether you’re designing for external clients, working as part of an in-house team, or building a digital product, user research is critical.

As you build your UX toolbox, it’s essential to equip yourself with the research tools you need to design great user experiences effectively. I’ll provide these in a section that explores the research landscape and dives deeper into the details.

Research is a vast topic so consider this article a short primer, but — as in my previous article — I’ll provide some tips and techniques on both qualitative and quantitative research (as well as defining what these methods are!), and I’ll provide some suggested reading.

User-Centred Design Requires User Research

As this section’s title summarises: user-centered design requires user research. It’s impossible to design effective and memorable user experiences if you haven’t placed your users right at the heart of your design process.

When embarking upon the design process — indeed, as you’re framing the problem you’re trying to solve — it’s important to ask:

- What do your users want to get done?

- What are their goals?

- What are they trying to achieve?

Your user research should give you some insight into the answers to these questions. It should also be one of the first things you focus on. In short: it’s critical to undertake user research right at the beginning of the project. This helps to define the scope of the project (what exactly it is you’re doing) and the goal (what the intention is).

Start with the goal, clearly defined, and work back from that.

Great products enable customers to get jobs done; your role as a designer is to define those jobs, then design for them. In short: spend some time with your users, getting to know their needs and what it is they are trying to achieve. These are their “jobs to be done.”

If the phrase “jobs to be done” is new to you, it’s well worth becoming better acquainted with it. I’d highly recommend bookmarking Alan Klement’s excellent Jobs to be Done website, which dives a little deeper on the subject. The team at Intercom, an excellent product, have also written a book on the topic, Intercom on Jobs to be Done.

It’s critical to focus on users first, or you’re in danger of designing based on your assumptions, which aren’t necessarily correct. Instead of beginning with assumptions, begin with user research. Define the problem you’re trying to solve, build a prototype, test your assumptions, and iterate.

Research should be an ongoing process: it’s rarely, if ever, “finished”, and it’s returned to repeatedly throughout a project. Ideally, you should adopt an iterative approach towards your design:

- Research

- Design

- Prototype

- Build

- Test

It’s important to stress that this process is a loop that we run through repeatedly. By undertaking user research, we can frame a problem, design, prototype, build it, and finally, return to our users to test our assumptions.

Looping iteratively through this process leads to better results as user feedback shapes user experience. With something built — even at a functional prototype stage, using a tool like Adobe XD — it’s important to test it. The best approach is a lean approach: move quickly and design effectively, by creating a minimum viable product (MVP), testing it and iterating.

You can learn a lot through observation, watching how your users interact with your prototypes. As Yogi Berra puts it, in characteristic style: “You can observe a lot by just watching.”

In short: it’s critical not to get lost designing in a vacuum, isolated from users, so it’s important to get out of the studio and meet them. Design without users in mind isn’t design. It can also lead to mistakes that are expensive to fix later.

It’s a mistake to think: “We can’t afford user research.” This last point — you cannot afford not to undertake user research — is critical. It’s better to undertake some research than no research.

The Research Landscape

There is a wealth of tools we can use as designers to inform our research and, as your experience of user research widens, you’ll learn when to use which tool. As we’ll see shortly, when we explore analyzing research data, it’s important to use a mix of research methods to ensure your findings are grounded and informed from a variety of perspectives.

There are many tools and — unless you’re a bona fide design researcher — it’s impossible to be fluent in all of them. It is, however, important to start to build a research toolbox, adding methods as your knowledge expands.

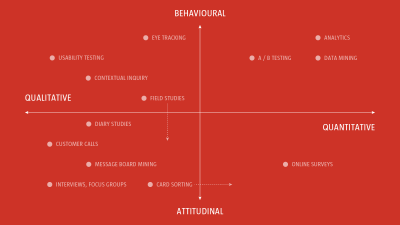

We can think of the research landscape as one with two axes:

- Qualitative and Quantitative; and

- Behavioral and Attitudinal

On one axis we have qualitative approaches, exploring opinions; and quantitative approaches, testing opinions by exploring data. On the second axis we have behavioral approaches, observing users, watching what they do; and attitudinal approaches, listening to users, exploring their attitudes and opinions.

Before I introduce some qualitative and quantitative research methods, it’s important to stress that who you test matters. Testing your designs on your studio mates won’t cut the mustard. It’s important to work with real-life humans outside of your building, preferably real-life humans that echo the users you are designing for.

When undertaking qualitative research — where you are working more closely with research participants — it’s important to put some thought into finding the right kind of people. If you’re designing a project for the elderly, for example, it’s essential to establish a panel of research participants that fit the profile of who you are designing for.

It’s critical to find the right kind of people, and building a screening document for candidates is one way of doing this. Essentially a screening document lists the characteristics of the users you’re designing for, for example whether they’re male or female, aged 65+, computer literate, travel regularly, and so on.

The bottom line? It’s important to select your research participants with care. They will shape your research findings considerably, so it’s essential to put some work into this part of the process.
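
A screening document can be as simple as a checklist applied consistently to every candidate. The sketch below is illustrative only: the `Candidate` fields, the sample people, and the threshold of four trips a year for “travels regularly” are all assumptions made for the example, not criteria from any real study.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    age: int
    computer_literate: bool
    trips_per_year: int


def passes_screener(candidate: Candidate) -> bool:
    """Hypothetical screener: aged 65+, computer literate, travels regularly."""
    return (
        candidate.age >= 65
        and candidate.computer_literate
        and candidate.trips_per_year >= 4  # assumed meaning of "regularly"
    )


# Made-up candidates for illustration.
candidates = [
    Candidate("A", 71, True, 6),
    Candidate("B", 34, True, 12),
    Candidate("C", 68, False, 5),
    Candidate("D", 66, True, 1),
]

panel = [c.name for c in candidates if passes_screener(c)]
print(panel)
```

Writing the criteria down this explicitly makes it harder to quietly relax them when recruitment gets difficult.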

Qualitative Research

Qualitative research is primarily exploratory research, undertaken to establish users’ underlying motivations and needs. It’s useful to help you shape your thinking and to establish ideas, which can then be built and tested using quantitative methods.

Broadly speaking, qualitative methods are largely unstructured, tend to be subjective, are at the softer end of science, and are about establishing insights and theories (which we then test, often using quantitative approaches). They tend to involve smaller sample sizes and require a degree of hands-on facilitation. With qualitative research, user behaviors and attitudes are gathered directly.

I’ll be exploring three qualitative research methods in this article: interviews, contextual inquiries, and card sorting. User testing is also particularly important. However, I’ll be exploring it in depth in a future article in this series. Until then — if you’re eager to learn a little more — I’d suggest reading Nick Babich’s 10 Simple Tips To Improve User Testing.

Interviews

Interviews are a great way to really get to the heart of your users’ needs. To facilitate interviews effectively requires a degree of empathy, some social skills, and a sense of self-awareness. If you’re not this person, find someone on your team who is.

It’s important to put your interviewees at ease; they need to feel comfortable sharing their thinking with you. If you can build a rapport with them, they’ll often open up more honestly with you. One of the biggest benefits of interviews (over, for example, surveys) is that you learn not only from your interviewees’ answers but from their body language, too.

Broadly speaking interviews fall into one of two categories:

- Structured Interviews: Where the interviewer focuses on a series of structured questions, comparing the responses of the interviewee to other interviewees’ responses.

- Semi-Structured Interviews: Where the interviewer adopts a looser, more discussion-driven approach, letting the interview evolve a little more naturally.

In reality, anyone who has conducted interviews will know that they are, by nature, organic. Even if you have a predetermined structure in place, it’s important to leave some breathing room so the interview can evolve.

Put some thought into your research questions in advance, but allow the interviewee the latitude to move into areas that you may not have considered upfront. Interviews are a useful way to challenge your assumptions and interviewees can often lead you to unexpected discoveries and things that you perhaps weren’t aware of.

It’s important to put the interviewee at ease, ensuring they feel comfortable answering your questions. Facilitating an interview effectively isn’t easy, so it’s helpful to use another member of the team as a notetaker. This allows you to focus 100% on your interviewee.

Contextual Inquiry

Returning to Yogi Berra: “You can observe a lot by just watching.” A contextual inquiry is a form of ethnographic interview, where users are observed and questioned in their own environment, to try to determine their approach towards specific tasks.

A contextual inquiry is focused around four key principles:

- Context: Interviews are conducted in the user’s workplace, which affords an opportunity to experience typical working conditions, existing solutions and, equally, a user’s frustrations.

- Partnership: The researcher and user work together to understand the user’s workflow and the tools they use.

- Interpretation: By sharing the researcher’s observations and insights with the user, there is an opportunity for the user to clarify or extend the researcher’s findings.

- Focus: The researcher is able to guide the user’s interactions towards aspects that are relevant to the specific project’s scope.

There are many benefits to adopting this approach. Because users are observed and questioned in their own workplace, a contextual inquiry affords an opportunity to get a realistic view of users’ needs and, equally, their frustrations in a day-to-day context.

Card Sorting

Card sorting is a useful research method to establish information architecture (IA), in short: deciding what goes where and ensuring your information groupings make sense to the widest possible audience. Card sorting is particularly useful if you’re working with a group of stakeholders collectively.

Card sorting involves writing words or phrases onto separate cards — hence the name — then asking your research participants to organize them. Take care to ensure your cards are shuffled so that you don’t bias your users. Ask your users, individually or collectively, to organize them into logical groupings.

Card sorting is relatively cheap; it can also be used as a helpful way to build consensus amongst stakeholders, asking them to — as a team — define groupings. By asking your users to name their groupings, you can discover words or synonyms that can be used for navigation labels.

Although there are tools that enable you to run card sorting exercises online, observing and listening to your users debate groupings in person can give you valuable insights into how they see logical groupings.
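
Once several participants have sorted the same cards, one simple way to summarize the results is to count how often each pair of cards ends up in the same group. The sketch below assumes made-up cards, group names, and three hypothetical participants; it is one possible analysis, not a prescribed one.

```python
from collections import Counter
from itertools import combinations

# Each participant's sort: group label -> cards placed in that group (made-up data).
sorts = [
    {"Account": ["Login", "Profile"], "Help": ["FAQ", "Contact"]},
    {"You": ["Login", "Profile", "Contact"], "Support": ["FAQ"]},
    {"Settings": ["Profile", "Login"], "Support": ["FAQ", "Contact"]},
]

# Count how many participants placed each pair of cards in the same group.
pair_counts = Counter()
for sort in sorts:
    for cards in sort.values():
        # Sort the cards so ("Login", "Profile") and ("Profile", "Login") count as one pair.
        for pair in combinations(sorted(cards), 2):
            pair_counts[pair] += 1

for (first, second), count in pair_counts.most_common():
    print(f"{first} + {second}: grouped together by {count} of {len(sorts)} participants")
```

Pairs that almost everyone groups together are strong candidates for sitting under the same navigation label; pairs that split the room are worth discussing with participants.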

Quantitative Research

Quantitative research is primarily undertaken to test your assumptions. It’s useful for putting the ideas and theories established through qualitative research to the test, using measurable data.

Broadly speaking, quantitative methods are largely structured, tend to be objective, are at the harder (more measurable) end of science, and are about testing theories. They tend to involve larger sample sizes and can be run in a more hands-off manner. With quantitative research, user behaviors and attitudes are gathered indirectly.

I’ll be exploring three quantitative research methods in this article: surveys and questionnaires, analytics and A/B testing.

Surveys And Questionnaires

Surveys and questionnaires are a powerful tool for gathering a higher volume of opinions. However, they’re generally run in a more hands-off manner. That doesn’t mean they’re not useful, but if possible focus on interviews first.

Surveys and questionnaires generally lack interaction between the interviewer and the interviewees, often being undertaken remotely. As such it can be difficult to gain the insights possible when working directly with users and observing them. Often it’s what users do that’s the most interesting discovery, not what they say.

If you’re undertaking a survey it helps to incentivize it in some way; you need to try and motivate as many users as possible to participate. Also, if it’s possible and you’re with the people you’re surveying, paper-based surveys beat digital surveys every time. People have a tendency to forget to return to digital surveys, bookmarking them in their minds for later. Paper is more immediate and, as such, leads to a greater return rate.

Spend time on your survey questions and distill them down. It’s better to ask fewer questions and increase the chance of returns than to ask endless (often irrelevant) questions and lose participants through boredom.

Lastly, the design of your survey is important and can improve completion rates. Typeform is a lovely tool that uses beautiful design to ensure even surveys are pleasurable.

Analytics

We’re fortunate now to have considerable quantities of data at our fingertips. Tools like Google Analytics — the most widely used web analytics service — let you measure website traffic and generate reports quickly and easily. Analytics, although occasionally a little overwhelming, are great for testing your assumptions.

Drawn from data, analytics can be a persuasive tool when working with business executives who, more often than not, “Want to see things in black and white.” Having easy access to numbers of unique visitors, page views, pages per visit and other metrics allows you to test your thinking once you’ve implemented your design after your qualitative research phase.
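
To make these metrics concrete, here is a minimal sketch of how unique visitors, page views, and pages per visit relate to one another. The log format and data are invented for the example, and the definitions are deliberately simplified; real analytics tools apply their own rules for what counts as a visitor or a visit.

```python
# Made-up page-view log: (visitor_id, visit_id, page).
page_views = [
    ("u1", "v1", "/"),
    ("u1", "v1", "/pricing"),
    ("u2", "v2", "/"),
    ("u1", "v3", "/"),
    ("u3", "v4", "/blog"),
    ("u3", "v4", "/pricing"),
]

# Distinct people, distinct visits, and the average number of pages seen per visit.
unique_visitors = len({visitor for visitor, _, _ in page_views})
visits = len({visit for _, visit, _ in page_views})
pages_per_visit = len(page_views) / visits

print(f"Unique visitors: {unique_visitors}")
print(f"Page views: {len(page_views)}")
print(f"Pages per visit: {pages_per_visit:.1f}")
```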

Analytics is a huge topic and one which can be hard to grasp if data and statistics aren’t your strong point. If you’re looking for a concise overview, Neil O’Donoghue’s Advanced User Research Techniques on Medium is a great place to start.

A/B Testing

A/B testing is another useful tool for trying out multiple ideas to see which ones work best. Essentially a controlled experiment with two variants, A and B, this form of research allows you to test different designs’ effectiveness against each other.

As the name implies, two versions are compared, which are — more often than not — identical apart from a single variation that might (or might not) affect a user’s behavior. A/B tests can be useful when testing assumptions informed by your qualitative findings.

A/B testing doesn’t just have to focus on visual design; it can focus on language, too. For example, you might want to test a call to action (CTA) button with two variants of copy:

- “Start your free 30-day trial” or

- “Start my free 30-day trial”

In the above example, in a test run by Unbounce, “Changing the CTA button copy from the second person [your] to the first person [my] resulted in a 90% lift in click-through rate.”

A/B testing works well when you have large sample sizes. With a lot of traffic to a website, or a large mailing list, you can be more confident that your findings are backed by a substantial quantity of data.
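
To see why sample size matters, it can help to run the numbers. The sketch below uses a two-proportion z-test, which is one common way to check whether a difference in click-through rate is likely to be more than chance; the choice of test and all of the figures are assumptions made for illustration, not taken from the Unbounce test.

```python
import math


def two_proportion_z_test(clicks_a, visitors_a, clicks_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in click-through rate."""
    rate_a = clicks_a / visitors_a
    rate_b = clicks_b / visitors_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the normal approximation.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: the same click-through rates (5% vs 8%) at two sample sizes.
_, p_small = two_proportion_z_test(5, 100, 8, 100)
_, p_large = two_proportion_z_test(500, 10_000, 800, 10_000)

print(f"100 visitors per variant:    p = {p_small:.3f}")
print(f"10,000 visitors per variant: p = {p_large:.3g}")
```

With only 100 visitors per variant, a jump from 5% to 8% could plausibly be noise; with 10,000 per variant, the same difference is very unlikely to be down to chance.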

Tips And Techniques

- As important as the research method you choose is who you test your thinking on. It’s helpful to use a screener to screen potential users before undertaking user research. usability.gov have some excellent resources for this.

- When developing interview questions, and surveys and questionnaires, it’s important to consider both qualitative and quantitative questions. Both are important. Qualitative questions are open-ended (“How might you improve this customer journey?”). Quantitative questions, on the other hand, tend to be yes/no (“Do you use this feature?”).

- When working with groups of research participants, it’s important to be wary of the herd mentality. An opinionated focus group participant can influence a focus group if you’re not careful. Build in systems that guard against this to ensure everyone has a voice. One tool that’s useful to level the playing field is the K-J Technique, which helps participants reach an objective group consensus.

When choosing your research methods, it’s important to use qualitative and quantitative methods hand-in-hand; both have their place. Qualitative methods lead to insights; quantitative methods allow you to test those insights.

The tool you choose will be informed by what you are trying to achieve, but — above all — ensure you’re undertaking some user research to kick off the design process so that you’re starting from an informed position.

Analyzing Research Findings

Finally, it’s important to put all this research to good use! There’s not much point in doing user research if we don’t undertake some good, old-fashioned analysis.

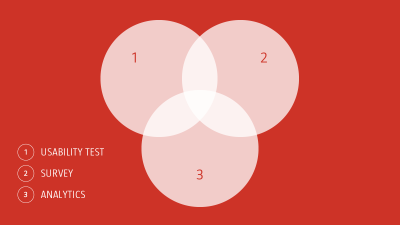

With a number of research methods used, it’s important to triangulate your findings, looking for correlations and patterns. Your aim is to see if any findings arise that are confirmed by your different research methods so that you can implement these findings.

Triangulation is the process of using multiple research points from multiple methods to increase your confidence in your research and assumptions. The more data points we use, the more confident we can be in our assumptions.

This is why it’s essential to:

- Run different kinds of user tests, to test different assumptions; and

- Run these with multiple users.

Different research methods have different strengths, lending themselves to different scenarios. Different users respond in different ways, offering different opinions. Ideally, you need a healthy mix of different research methods and different test subjects to cover all the bases.
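
As a rough illustration of triangulation, you could tag each finding with the research method that surfaced it, then keep only the findings confirmed by more than one method. The findings, the method names, and the “at least two methods” threshold below are all made up for the example.

```python
from collections import defaultdict

# Made-up findings, tagged by the research method that surfaced them.
findings_by_method = {
    "interviews": {"checkout is confusing", "search is hard to find"},
    "analytics": {"checkout is confusing", "mobile visits are growing"},
    "survey": {"checkout is confusing", "search is hard to find"},
}

# Invert the mapping: for each finding, which methods support it?
methods_per_finding = defaultdict(set)
for method, findings in findings_by_method.items():
    for finding in findings:
        methods_per_finding[finding].add(method)

# Keep findings confirmed by at least two different methods (assumed threshold).
triangulated = {
    finding: sorted(methods)
    for finding, methods in methods_per_finding.items()
    if len(methods) >= 2
}

for finding, methods in sorted(triangulated.items()):
    print(f"{finding}: {', '.join(methods)}")
```

A finding that appears in only one method isn’t necessarily wrong; it simply hasn’t been corroborated yet and may be worth testing another way.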

In short, your research findings are just the beginning of the story. With these findings in hand, it’s important to triangulate the data and see what patterns emerge. With these patterns defined, you can embark upon your design and prototyping with better-informed assumptions. Win!

In Closing

Design research is by no means a new phenomenon. As our discipline has matured, however, we’ve seen design research and, particularly, user-centred research grow in importance. The history of design research stretches back to the late twentieth century when it was formalized.

Bruce Archer (1922-2005), a Professor of Design Research at the Royal College of Art in London, was a pioneer who championed research in design and helped to establish design as an academic discipline. As he summarized it succinctly:

“Design research is systematic enquiry.”

Archer trained a generation of design researchers at the Royal College. By stressing the need for well-founded evidence and systematic analysis, he helped to map over the principles of scholarly research (largely drawn from the dusty world of academia), applying them to the field of design.

Archer stressed the importance of method and rigor, for findings to be documented so that they could, if necessary, be defended. This approach might sound commonplace to us today, but Archer’s ideas were, in their time, radical and controversial, not least within an art school.

Archer’s work was essential: it established the need to approach design in a systematic manner, informed by the needs of users, identifying these needs through systematic enquiry.

This understanding — that design should be informed by user needs and that user research is a route towards understanding — has changed user experience thinking for the better. Our goal, above all, is to inform the design process from the perspective of our users, not from the perspective of our assumptions.

Seeing things through our users’ eyes is the surest path to delivering a better and more memorable experience, and user research is how we find that path.

Suggested Reading

There are many great publications, offline and online, that will help you on your adventure. I’ve included a few below to start you on your journey.

If you’re just starting out, Erika Hall’s excellent book Just Enough Research, on A Book Apart, is a must-buy and must-read. It’s on the Required Reading list on our Interaction Design programme at Belfast School of Art and is a very good introduction to user research.

Nielsen Norman Group’s UX Research Cheat Sheet is an excellent overview of different research methods that can be used at different points in the design process. It’s well worth a bookmark.

Lastly, usability.gov is (like GOV.UK) an incredibly useful resource. The site’s User Research Basics resources are excellent, providing detailed breakdowns of different tools, like Card Sorting, and how and when to use them.

This article is part of the UX design series sponsored by Adobe. Adobe XD is made for a fast and fluid UX design process, as it lets you go from idea to prototype faster. Design, prototype, and share, all in one app. You can check out more inspiring projects created with Adobe XD on Behance, and also sign up for the Adobe experience design newsletter to stay updated and informed on the latest trends and insights for UX/UI design.
