Automating Website Deployments Through Buddy

(This is a sponsored article.) Managing the deployment of a website used to be easy: It simply involved uploading files to the server through FTP and you were pretty much done. But those days are gone: Websites have gotten very complex, involving many tools and technologies in their stacks.

Nowadays, a typical web project may require to execute build tools to compress assets and generate the deliverable files for production, upload the assets to a CDN and invalidate stale ones, execute a test suite to make sure the code has no errors (for both client and server-side code), do database migrations (and, to be on the safe side, first execute a backup of the database), instantiate the desired number of servers behind a load balancer and deploy the application to them (through an atomic deployment, so that the website is always available), download and install the dependencies, deploy serverless functions, and finally notify the team that everything is ready through Slack or by email.

All this process sounds like a bit too much, right? Well, it actually is too much. How can we avoid getting overwhelmed by the complexity of the task at hand? The solution boils down to a single word: Automation. By automating all the tasks to execute, we will not dread doing the deployment (and having a trembling sweaty finger when pressing the Enter button), indeed we may not be even aware of it.

Automation improves the quality of our work, since we can avoid having to manually execute mind-numbing tasks again and again, which will enable us to use all our time for coding, and reassures us that the deployment will not fail due to human errors (such as overriding the wrong folder as in the old FTP days).

Introduction To Continuous Integration, Delivery, And Deployment

Managing and automating software deployment involves both tools and processes. In particular, Git as the version control system where to store our source code, and the availability of Git-hosting services (such as GitHub, GitLab and BitBucket) which trigger events when new code is pushed into the repository, enable to benefit from the following processes:

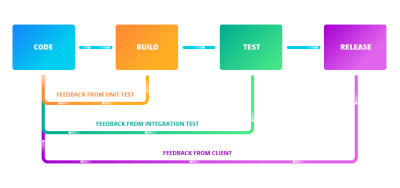

- Continuous Integration

The strategy of merging changes in the code into the main branch as often as possible, upon which automated tests against a build of the codebase are run to validate that the new code doesn’t introduce errors; - Continuous Delivery

An extension to Continuous Integration which also automates the release process, enabling to deploy the project into production at any moment; - Continuous Deployment

An extension to Continuous Delivery which automatically deploys the new code whenever it passes all required tests (as small a change it may contain), enabling to easily identify the source of any problem that might arise, and removing pressure off the team as it doesn’t need to deal with a "release day" anymore.

Adhering to these strategies has several benefits. The most immediate one is that our product can ship new features faster, indeed they can go live as soon as the team has finished coding them. The team can also receive feedback immediately (either from team members on a development environment, from the client on a staging environment, and from the users after it goes live) and be able to react straight away, thus creating a positive feedback loop. And because the whole process is fully automated, the team can save time and focus on the code, thus improving the quality of the product.

Introducing Buddy, A Tool For Automating Software Deployment

The popularity of Git has given rise to a new generation of tools to manage the complexity of software deployments. Buddy is one of these new tools, born with the goal of making it easy to implement Continuous Integration/Delivery/Deployment, while broadening the number of features our application can provide, improving its quality, and reducing its costs by allowing to incorporate the offerings of the best or cheapest cloud-based service providers (among them AWS, DigitalOcean, Google Cloud Platform, Cloudflare, Rackspace, Azure, and others) into our stacks. This way, for instance, our application can be hosted on GitHub, be protected from DDoS through Cloudflare, have its static files hosted through DigitalOcean, use serverless functions from AWS Lambda, and authenticate users through Firebase, and everything is handled seamlessly.

Buddy operates through the use of pipelines: Sets of actions defined by the developer in a specific order, executed either manually or automatically when executing a Git push, that deliver the application from a Git repository to wherever needed and transforming it as required. Pipelines are extremely flexible, enabling developers to add only the required actions and have them customized for their specific needs.

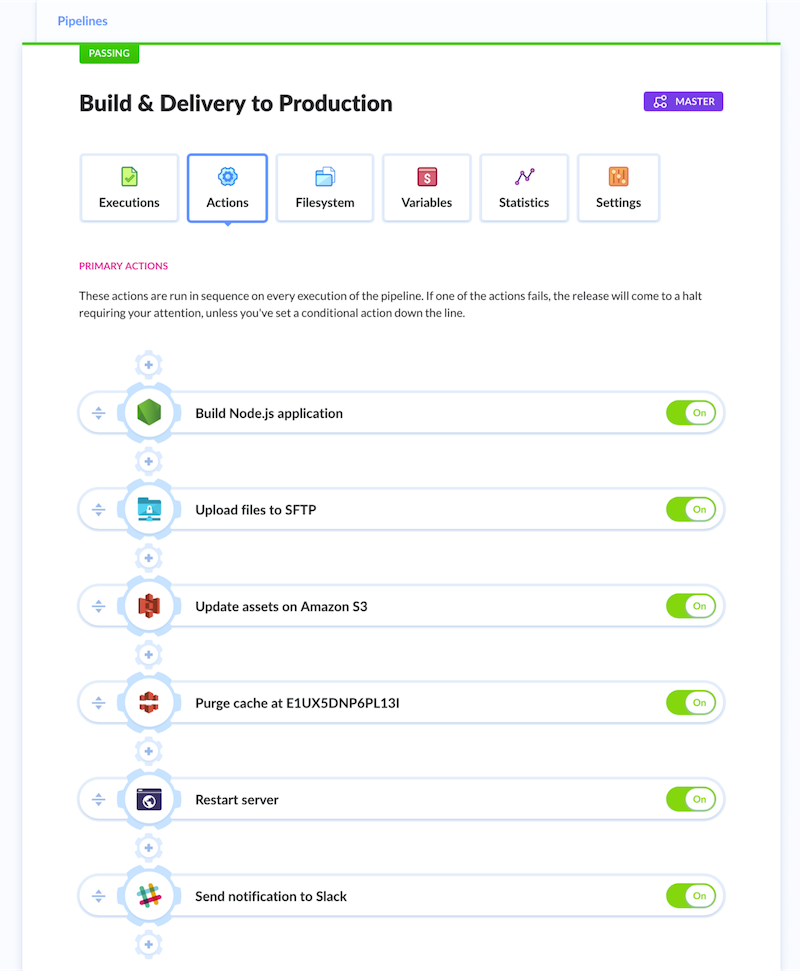

For instance, the following pipeline performs all required tasks to deploy some Node.js application: execute the build step, upload files to the server through SFTP, upload assets to AWS S3 and purge them from the CDN, restart the server and finally inform the team through Slack (as it can be appreciated in the image below, the pipeline can be self-explanatory):

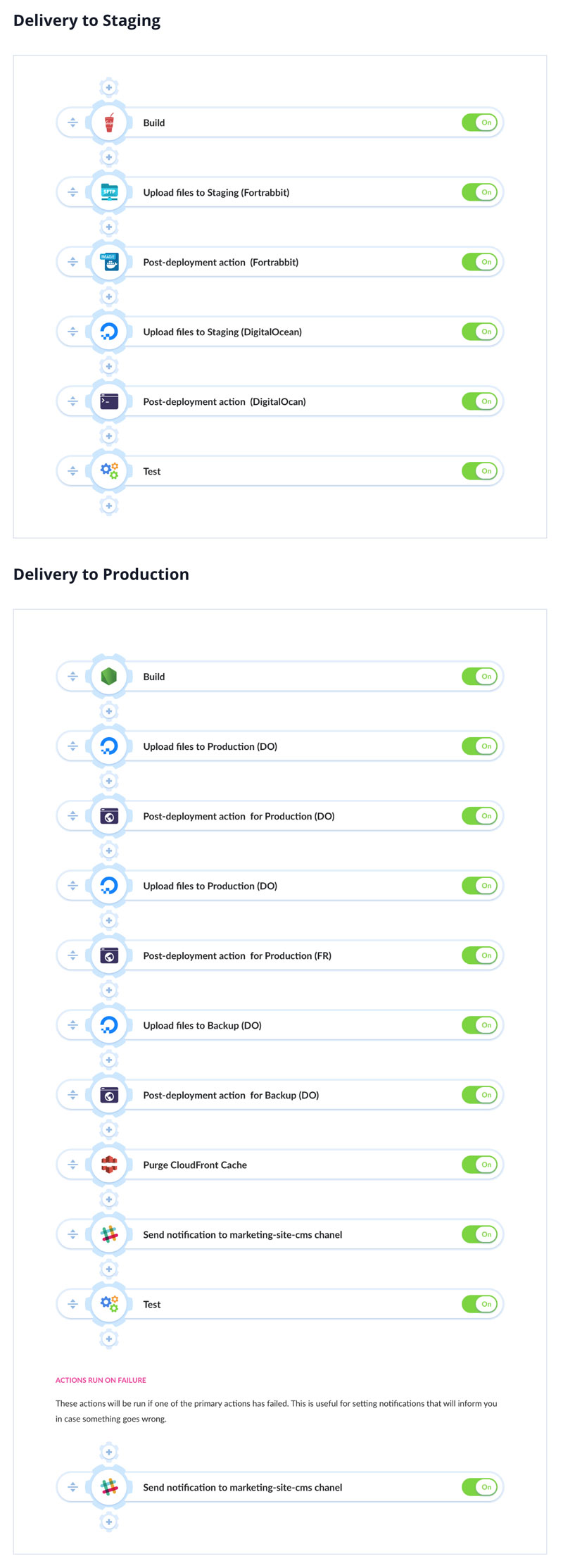

We can create different pipelines for different environments, and execute special actions when the process fails (such as when a test was not successful when the server to deploy to is down, or others). For instance, the following pipeline (to deploy a Node.js & PHP app that uses DigitalOcean, Fortrabbit & AWS CloudFront for hosting) makes a backup of assets and purges the CDN only when deploying to production, and sends a notification to the team through Slack in case of failure:

A noteworthy effect of configuring our pipelines with actions from different cloud-service providers is that we can conveniently switch among them whenever the need arises, making it easy to avoid vendor lock-in (this includes also changing the repository provider). Buddy offers slightly over 100 actions out of the box, and also allows developers to create and use their own actions. This image shows all the readily available actions:

Creating A Pipeline

Let’s see how to create a simple pipeline to test and deploy a Node.js application, and send a notification to the team. The first step is to create a new project, during which you will be asked to select the project’s hosting provider (from among GitHub, GitLab, Bitbucket, Buddy Git Hosting, and your private Git server), and then to select the repository:

Then we can create the pipeline, specifying when it must run (either manually, automatically after new code is pushed to the repository, or automatically every x amount of time) and from which branch:

Then we can add actions to the pipeline. For that, we simply click on the “+” button to add a new action, upon which we must configure it as needed. To build and test a Node.js application we add and configure a “Node.js” action:

After testing the application, we can deploy it by uploading it to our production server through SFTP. For this, we add an “SFTP” action, and configure it through custom-defined environment variables ${SFTP} and ${SFTP_USER}:

Finally, we send an email to the team with the results of the execution. For this, we add and configure the “Email” action:

That’s it. Our pipeline will look like this:

From this moment on, if the pipeline was configured to run when new code is pushed to the repository, doing a git push will trigger the execution of the pipeline.

Staying Constantly Up To Date

Web development is in a never-ending state of flux, with new tools and services being launched without a break, some of them often becoming a hot trend immediately and the new normal barely a few months later. Technologies seldom heard of a few years ago progressively gain importance and eventually become a must (voice search, machine learning, WebAssembly), new frameworks and libraries offer new ways of building sites (GraphQL, Gatsby, Next.js, Nuxt.js), and our applications need to be accessed from newly-invented devices (Amazon Echo, In-car systems). To keep our applications relevant, we must continuously evaluate the latest offerings and decide if to add them to our technology stack. Hence, it is extremely important that our platforms for developing the application do not restrict what technologies we can use.

Buddy deals with this issue by continuously collecting feedback from its users about what they need (through user polls, their forum, communication channels, and tweets), and its team strives to deliver the required features. The Buddy blog provides a glimpse of the intense pace of development: For instance, in the last few months they implemented features for building static apps and websites with Gatsby, deploying to UpCloud and to Google Cloud Functions, triggering pipelines with webhooks, integrating with Firebase, building and running Docker containers on AWS ECS, and many others.

Conclusion

Automation has become a must to avoid being overwhelmed by the complexity of modern website deployment. We can make use of Continuous Integration/Delivery/Deployment (readily feasible by hosting our source code through Git) to shorten the time needed for delivering new features into our applications and getting feedback from the users.

Buddy helps in this task, enabling developers to create pipelines to execute actions concerning a wide array of technologies and cloud-service providers, and combining these actions in any possible way to satisfy the most particular needs.

You can check out Buddy for free for 2 weeks and, if you need to host your data, you can also install it on your own premises.

Further Reading

- How To Hack Your Google Lighthouse Scores In 2024

- What Are CSS Container Style Queries Good For?

- Useful Email Newsletters For Designers

- The Modern Guide For Making CSS Shapes