The State Of Web Workers In 2021

I’m weary of always comparing the web to so-called “native” platforms like Android and iOS. The web is streaming, meaning it has none of the resources locally available when you open an app for the first time. This is such a fundamental difference that many architectural choices from native platforms don’t easily apply to the web, if at all.

But regardless of where you look, multithreading is used everywhere. iOS empowers developers to easily parallelize code using Grand Central Dispatch, Android does this via its new, unified task scheduler WorkManager, and game engines like Unity have job systems. The reason these platforms not only support multithreading but also make it as easy as possible is always the same: to ensure your app feels great.

In this article, I’ll outline my mental model of why multithreading is important on the web, give you an introduction to the primitives that we as developers have at our disposal, and talk a bit about architectures that make it easy to adopt multithreading, even incrementally.

The Problem Of Unpredictable Performance

The goal is to keep your app smooth and responsive. Smooth means having a steady and sufficiently high frame rate. Responsive means that the UI responds to user interactions with minimal delay. Both of these are key factors in making your app feel polished and high-quality.

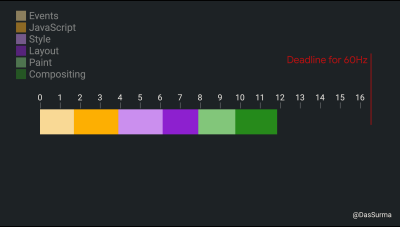

According to RAIL, being responsive means reacting to a user’s action in under 100ms, and being smooth means shipping a stable 60 frames per second (fps) when anything on the screen is moving. Consequently, we as developers have 1000ms/60 = 16.6ms to produce each frame, which is also called the “frame budget”.

I say “we”, but it’s really the browser that has 16.6ms to do everything required to render a frame. We developers are only directly responsible for one part of the workload that the browser has to deal with. That work consists of (but is not limited to):

- Detecting which element the user may or may not have tapped;

- firing the corresponding events;

- running associated JavaScript event handlers;

- calculating styles;

- doing layout;

- painting layers;

- and compositing those layers into the final image the user sees on screen;

- (and more …)

Quite a lot of work.

At the same time, we have a widening performance gap. The top-tier flagship phones are getting faster with every new generation that’s released. Low-end phones on the other hand are getting cheaper, making the mobile internet accessible to demographics that previously maybe couldn’t afford it. In terms of performance, these phones have plateaued at the performance of a 2012 iPhone.

Applications built for the Web are expected to run on devices that fall anywhere on this broad performance spectrum. How long your piece of JavaScript takes to finish depends on how fast the device is that your code is running on. Not only that, but the duration of the other browser tasks like layout and paint are also affected by the device’s performance characteristics. What takes 0.5ms on a modern iPhone might take 10ms on a Nokia 2. The performance of the user’s device is completely unpredictable.

Note: RAIL has been a guiding framework for 6 years now. It’s important to note that 60fps is really a placeholder value for whatever the native refresh rate of the user’s display is. For example, some of the newer Pixel phones have a 90Hz screen and the iPad Pro has a 120Hz screen, reducing the frame budget to 11.1ms and 8.3ms respectively.

To complicate things further, there is no good way to determine the refresh rate of the device that your app is running on apart from measuring the amount of time that elapses between requestAnimationFrame() callbacks.
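As a rough sketch of that measurement, the snippet below collects requestAnimationFrame() timestamps and derives the refresh rate and frame budget from the typical interval between them. The function names estimateRefreshRate and sampleFrames are made up for this illustration, and taking the median interval is an assumption on my part to keep a few dropped frames from skewing the estimate.

```javascript
// Estimate the display refresh rate from a list of requestAnimationFrame
// timestamps (in milliseconds). Uses the (upper) median frame interval so
// that a handful of dropped frames does not distort the result.
function estimateRefreshRate(timestamps) {
  if (timestamps.length < 2) {
    throw new Error("Need at least two timestamps");
  }
  const deltas = [];
  for (let i = 1; i < timestamps.length; i++) {
    deltas.push(timestamps[i] - timestamps[i - 1]);
  }
  deltas.sort((a, b) => a - b);
  const median = deltas[Math.floor(deltas.length / 2)];
  return { hz: 1000 / median, frameBudgetMs: median };
}

// Browser-only helper: collects `frameCount` timestamps via rAF.
function sampleFrames(frameCount = 60) {
  return new Promise((resolve) => {
    const timestamps = [];
    function onFrame(now) {
      timestamps.push(now);
      if (timestamps.length < frameCount) {
        requestAnimationFrame(onFrame);
      } else {
        resolve(timestamps);
      }
    }
    requestAnimationFrame(onFrame);
  });
}

// Usage in a page:
//   sampleFrames().then(estimateRefreshRate).then(console.log);
```

The result is only an estimate: if the page itself is dropping most frames while sampling, the measured interval will be longer than the display's real frame time.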

JavaScript

JavaScript was designed to run in lock-step with the browser’s main rendering loop. Pretty much every web app out there relies on this model. The drawback of that design is that a small amount of slow JavaScript code can prevent the browser’s rendering loop from continuing. They are in lockstep: if one doesn’t finish, the other can’t continue. To allow longer-running tasks to be integrated into JavaScript, an asynchronicity model was established on the basis of callbacks and later promises.

To keep your app smooth, you need to make sure that your JavaScript code combined with the other tasks the browser has to do (styles, layout, paint,…) doesn’t add up to a duration longer than the device’s frame budget. To keep your app responsive, you need to make sure that any given event handler doesn’t take longer than 100ms in order for it to show a change on the device’s screen. Achieving this on your own device during development can be hard, but achieving this on every device your app could possibly run on can seem impossible.

The usual advice here is to “chunk your code” or its sibling phrasing “yield to the browser”. The underlying principle is the same: To give the browser a chance to ship the next frame you break up the work your code is doing into smaller chunks, and pass control back to the browser to allow it to do work in-between those chunks.

There are multiple ways to yield to the browser, and none of them are great. A recently-proposed task scheduler API aims to expose this functionality directly. However, even if we had an API for yielding like await yieldToBrowser() (or something of the sort), the technique itself is flawed: To make sure you don’t blow through your frame budget, you need to do work in small enough chunks that your code yields at least once every frame.

At the same time, code that yields too often can cause the overhead of scheduling tasks to become a net-negative influence on your app’s overall performance. Now combine that with the unpredictable performance of devices, and we have to arrive at the conclusion that there is no correct chunk size that fits all devices. This is especially problematic when trying to “chunk” UI work, since yielding to the browser can render partially complete interfaces that increase the total cost of layout and paint.
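To make the trade-off concrete, here is a minimal sketch of time-based chunking. It yields with setTimeout(), which is only one of several imperfect ways to yield, and the names yieldToEventLoop and processInChunks as well as the default budget of 5ms are assumptions chosen for illustration.

```javascript
// Yield to the event loop so the browser gets a chance to render a frame.
function yieldToEventLoop() {
  return new Promise((resolve) => setTimeout(resolve, 0));
}

// Process `items` with `processItem`, yielding whenever `budgetMs` of
// uninterrupted work has elapsed. `budgetMs` is a guess: as discussed
// above, no single value is right for every device.
async function processInChunks(items, processItem, budgetMs = 5) {
  const results = [];
  let chunkStart = performance.now();
  for (const item of items) {
    results.push(processItem(item));
    if (performance.now() - chunkStart >= budgetMs) {
      await yieldToEventLoop();
      chunkStart = performance.now();
    }
  }
  return results;
}
```

Note that the check only runs between items, so a single slow processItem() call can still blow through the budget on a slow device.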

Web Workers

There is a way to break from running in lock-step with the browser’s rendering thread. We can move some of our code to a different thread. Once in a different thread, we can block at our heart’s desire with long-running JavaScript, without the complexity and cost of chunking and yielding, and the rendering thread won’t even be aware of it. The primitive to do that on the web is called a web worker. A web worker can be constructed by passing in the path to a separate JavaScript file that will be loaded and run in this newly created thread:

const worker = new Worker("./worker.js");

Before we get more into that, it’s important to note that Web Workers, Service Workers and Worklets are similar, but ultimately different things for different purposes:

- In this article, I am exclusively talking about Web Workers (often just “Worker” for short). A worker is an isolated JavaScript scope running in a separate thread. It is spawned (and owned) by a page.

- A ServiceWorker is a short-lived, isolated JavaScript scope running in a separate thread, functioning as a proxy for every network request originating from pages of the same origin. First and foremost, this allows you to implement arbitrarily complex caching behavior, but it has also been extended to let you tap into long-running background fetches, push notifications, and other functionality that requires code to run without an associated page. It is a lot like a Web Worker, but with a specific purpose and additional constraints.

- A Worklet is an isolated JavaScript scope with a severely limited API that may or may not run on a separate thread. The point of worklets is that browsers can move worklets around between threads. AudioWorklet, CSS Painting API and Animation Worklet are examples of Worklets.

- A SharedWorker is a special Web Worker, in that multiple tabs or windows of the same origin can reference the same SharedWorker. The API is pretty much impossible to polyfill and has not been available in every major browser (Safari, for one, went without it for years), so I won’t be paying any attention to it in this article.

As JavaScript was designed to run in lock-step with the browser, many of the APIs exposed to JavaScript are not thread-safe, as there was no concurrency to deal with. For a data structure to be thread-safe means that it can be accessed and manipulated by multiple threads in parallel without its state being corrupted.

This is usually achieved by mutexes which lock out other threads while one thread is doing manipulations. Not having to deal with locks allows browsers and JavaScript engines to make a lot of optimizations to run your code faster. On the other hand, it forces a worker to run in a completely isolated JavaScript scope, since any form of data sharing would result in problems due to the lack of thread-safety.

While Workers are the “thread” primitive of the web, they are very different from the threads you might be used to from C++, Java & co. The biggest difference is that the required isolation means workers don’t have access to any variables or code from the page that created them or vice versa. The only way to exchange data is through message-passing via an API called postMessage, which will copy the message payload and trigger a message event on the receiving end. This also means that Workers don’t have access to the DOM, making UI updates from a worker impossible — at least without significant effort (like AMP’s worker-dom).
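The copying behavior is easy to observe. The sketch below uses a MessageChannel as a stand-in for the page/worker boundary so that it runs as a single script; its ports copy payloads the same way worker.postMessage() does. The helper name sendAcrossBoundary is hypothetical.

```javascript
// Send `payload` across a MessageChannel and resolve with what the
// receiving end got. With a real worker, the sending side would call
// worker.postMessage(payload) and the worker would listen for "message".
function sendAcrossBoundary(payload) {
  return new Promise((resolve) => {
    const { port1, port2 } = new MessageChannel();
    port2.onmessage = (event) => {
      port1.close(); // closing one port shuts down the whole channel
      resolve(event.data);
    };
    port1.postMessage(payload);
  });
}

const original = { board: [1, 2, 3] };
sendAcrossBoundary(original).then((received) => {
  received.board.push(4); // only changes the copy
  console.log(original.board.length); // 3: the sender's array is untouched
  console.log(received === original); // false: a distinct object
});
```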

Support for Web Workers is nearly universal, considering that even IE10 supported them. Their usage, on the other hand, is still relatively low, and I think to a large extent that is due to the unusual ergonomics of Workers.

JavaScript’s Concurrency Models

Any app that wants to make use of Workers has to adapt its architecture to accommodate the requirements of Workers. JavaScript actually supports two very different concurrency models often grouped under the term “Off-Main-Thread Architecture”. Both use Workers, but in very different ways and each bringing their own set of tradeoffs. Any given app usually ends up somewhere in between these two extremes.

Concurrency Model #1: Actors

My personal preference is to think of Workers like Actors, as they are described in the Actor Model. The Actor Model’s most popular incarnation is probably in the programming language Erlang. Each actor may or may not run on a separate thread and fully owns the data it is operating on. No other thread can access it, making synchronization mechanisms like mutexes unnecessary. Actors can only send messages to each other and react to the messages they receive.

As an example, I often think of the main thread as the actor that owns the DOM and consequently all the UI. It is responsible for updating the UI and capturing input events. Another actor could be in charge of the app’s state. The DOM actor converts low-level input events into app-level semantic events and sends them to the state actor. The state actor changes the state object according to the event it has received, potentially using a state machine or even involving other actors. Once the state object is updated, it sends a copy of the updated state object to the DOM actor. The DOM actor now updates the DOM according to the new state object. Paul Lewis and I once explored actor-centric app architecture at Chrome Dev Summit 2018.
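A stripped-down sketch of that DOM actor and state actor pair might look like the following. To keep it runnable as one script, both actors talk over a MessageChannel; in a real app the state actor would live in the worker file and receive the worker's global scope as its port, while the DOM actor would receive the Worker object. All names (startStateActor, startDomActor, createApp) and the counter state are invented for this illustration.

```javascript
// State actor: owns the state object and reacts to semantic events.
function startStateActor(port) {
  let state = { count: 0 };
  port.onmessage = (event) => {
    if (event.data.type === "increment") {
      state = { ...state, count: state.count + 1 };
    }
    port.postMessage(state); // the DOM actor receives a copy
  };
}

// DOM actor: turns input into semantic events and renders incoming state.
function startDomActor(port, render) {
  port.onmessage = (event) => render(event.data);
  return {
    // In a page, this would be called from e.g. a click handler.
    userClicked() {
      port.postMessage({ type: "increment" });
    },
  };
}

// Wire both actors together over a channel.
function createApp(render) {
  const { port1, port2 } = new MessageChannel();
  startStateActor(port2);
  const dom = startDomActor(port1, render);
  return { dom, stop: () => port1.close() };
}
```

Neither actor ever reads the other's variables; the only coupling is the shape of the messages, which is what makes it possible to move the state actor to a different thread later.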

Of course, this model doesn’t come without its problems. For example, every message you send needs to be copied. How long that takes not only depends on the size of the message but also on the device the app is running on. In my experience, postMessage is usually “fast enough”, but there are certain scenarios where it isn’t. Another problem is to strike the balance between moving code to a worker to free up the main thread, while at the same time having to pay the cost of communication overhead and the worker being busy with running other code before it can respond to your message. If done without care, workers can negatively affect UI responsiveness.

The messages you can send via postMessage can be quite complex. The underlying algorithm (called “structured clone”) can handle circular data structures and even Map and Set. It cannot handle functions or classes, however, as code can’t be shared across scopes in JavaScript. Somewhat irritatingly, trying to postMessage a function will throw an error, while an instance of a class will just be silently converted to a plain JavaScript object, losing the methods in the process (the details behind this make sense but are beyond the scope of this article).
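These rules can be checked without a worker by calling the global structuredClone() function, which applies the same algorithm that postMessage uses and is available in current browsers and in Node.js 17 or later. The Point class and the variable names are just examples.

```javascript
// A payload with a Set, a Map and a circular reference.
const node = { name: "root", tags: new Set(["a"]), meta: new Map([["k", 1]]) };
node.self = node;

const copy = structuredClone(node);
console.log(copy.self === copy);       // true: the cycle is preserved
console.log(copy.tags instanceof Set); // true: Set survives
console.log(copy.meta.get("k"));       // 1: Map survives

// Class instances keep their data but lose their prototype.
class Point {
  constructor(x, y) {
    this.x = x;
    this.y = y;
  }
  norm() {
    return Math.hypot(this.x, this.y);
  }
}
const pointCopy = structuredClone(new Point(3, 4));
console.log(pointCopy instanceof Point); // false: now a plain object
console.log(typeof pointCopy.norm);      // "undefined": the method is gone

// Functions cannot be cloned at all.
let functionError = null;
try {
  structuredClone(() => {});
} catch (err) {
  functionError = err; // the clone attempt throws
}
console.log(functionError !== null); // true
```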

Additionally, postMessage is a fire-and-forget messaging mechanism with no built-in understanding of request and response. If you want to employ a request/response mechanism (and in my experience most app architectures inevitably lead you there), you’ll have to build it yourself. That’s why I wrote Comlink, which is a library that uses an RPC protocol under the hood to make it seem like the objects from a worker are accessible from the main thread and vice versa. When using Comlink, you don’t have to deal with postMessage at all. The only artifact is that due to the asynchronous nature of postMessage, functions don’t return their result, but a promise for it instead. In my opinion, this gives you the best of the Actor Model and Shared Memory Concurrency.
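To give an idea of what “building it yourself” involves, here is a minimal hand-rolled request/response layer. It is not how Comlink is implemented; it only shows the core idea of tagging each message with an ID and matching the reply to a pending promise. The function names createRequester and serve and the message shape are assumptions made for this sketch.

```javascript
// Caller side: each request gets a unique id and a promise that settles
// when a response carrying the same id arrives.
function createRequester(port) {
  let nextId = 0;
  const pending = new Map();
  port.onmessage = (event) => {
    const { id, result, error } = event.data;
    const callbacks = pending.get(id);
    if (!callbacks) return; // not a response we are waiting for
    pending.delete(id);
    if (error !== undefined) callbacks.reject(new Error(error));
    else callbacks.resolve(result);
  };
  return function request(method, ...args) {
    return new Promise((resolve, reject) => {
      const id = nextId++;
      pending.set(id, { resolve, reject });
      port.postMessage({ id, method, args });
    });
  };
}

// Responder side: look up the requested method and reply with the same id.
function serve(port, methods) {
  port.onmessage = async (event) => {
    const { id, method, args } = event.data;
    try {
      const result = await methods[method](...args);
      port.postMessage({ id, result });
    } catch (err) {
      // Errors are sent as strings so the reply is always cloneable.
      port.postMessage({ id, error: String(err.message || err) });
    }
  };
}
```

With a real worker, serve() would run inside the worker file with the worker's global scope as the port, and createRequester() would be given the Worker object on the page. Both expose the same onmessage and postMessage pair that this sketch relies on.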

Comlink is not magic; it still has to use postMessage for the RPC protocol. If your app ends up being one of the rarer cases where postMessage is a bottleneck, it’s useful to know that ArrayBuffers can be transferred. Transferring an ArrayBuffer is near-instant and involves a proper transfer of ownership: The sending JavaScript scope loses access to the data in the process. I used this trick when I was experimenting with running the physics simulations of a WebVR app off the main thread.
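The loss of access on the sending side can be seen directly. With a worker you would pass the buffer in the transfer list, as in worker.postMessage(buffer, [buffer]). The sketch below uses structuredClone() with the same kind of transfer list instead, purely so that both “sides” can be inspected in a single script.

```javascript
const buffer = new ArrayBuffer(1024);
new Uint8Array(buffer)[0] = 42;

// Transfer instead of copy: ownership of the memory moves to `received`.
const received = structuredClone(buffer, { transfer: [buffer] });

console.log(buffer.byteLength);           // 0: the sender's buffer is detached
console.log(received.byteLength);         // 1024
console.log(new Uint8Array(received)[0]); // 42
```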

Concurrency Model #2: Shared Memory

As I mentioned above, the traditional approach to threading is based on shared memory. This approach isn’t viable in JavaScript as pretty much all APIs have been built with the assumption that there is no concurrent access to objects. Changing that now would either break the web or incur a significant performance cost because of the synchronization that is now necessary. Instead, the concept of shared memory has been limited to one dedicated type: SharedArrayBuffer (or SAB for short).

A SAB, like an ArrayBuffer, is a linear chunk of memory that can be manipulated using Typed Arrays or DataViews. If a SAB is sent via postMessage, the other end does not receive a copy of the data, but a handle to the exact same memory chunk. Every change done by one thread is visible to all other threads. To allow you to build your own mutexes and other concurrent data structures, Atomics provide all sorts of utilities for atomic operations or thread-safe waiting mechanisms.
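As a small illustration, the sketch below puts a lock flag and a counter into a SAB and manipulates them with Atomics. The two typed arrays stand in for the views that two different threads would hold after the SAB has been sent via postMessage; the slot layout and the tryLock/unlock helpers are assumptions for this example, not a complete mutex. In browsers, SharedArrayBuffer is generally only exposed on cross-origin isolated pages.

```javascript
// One Int32 slot for a lock flag, one for a counter.
const sab = new SharedArrayBuffer(2 * Int32Array.BYTES_PER_ELEMENT);
const viewA = new Int32Array(sab); // e.g. the main thread's view
const viewB = new Int32Array(sab); // e.g. a worker's view of the same memory
const LOCK = 0;
const COUNTER = 1;

function tryLock(view) {
  // Atomically flip LOCK from 0 to 1; succeeds only if it was 0 before.
  return Atomics.compareExchange(view, LOCK, 0, 1) === 0;
}

function unlock(view) {
  Atomics.store(view, LOCK, 0);
  Atomics.notify(view, LOCK, 1); // wake one thread blocked in Atomics.wait()
}

Atomics.add(viewA, COUNTER, 5);
const seenByB = Atomics.load(viewB, COUNTER); // 5: same memory, no copy

const firstAttempt = tryLock(viewA);  // true: lock was free
const secondAttempt = tryLock(viewB); // false: already held
unlock(viewA);
const thirdAttempt = tryLock(viewB);  // true: free again
```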

The drawbacks of this approach come in multiple flavors. First and foremost, it is just a chunk of memory. It is a very low-level primitive, giving you lots of flexibility and power at the cost of increased engineering efforts and maintenance. You also have no direct way of working on your familiar JavaScript objects and arrays. It’s just a series of bytes.

As an experimental way to improve ergonomics here, I wrote a library called buffer-backed-object that synthesizes JavaScript objects that persist their values to an underlying buffer. Alternatively, WebAssembly makes use of Workers and SharedArrayBuffers to support the threading model of C++ and other languages. I’d say WebAssembly currently offers the best experience for shared-memory concurrency, but also requires you to leave a lot of the benefits (and comfort) of JavaScript behind and buy into another language and (usually) bigger binaries.

Case-Study: PROXX

In 2019, my team and I published PROXX, a web-based Minesweeper clone that was specifically targeting feature phones. Feature phones have a small resolution, usually no touch interface, an underpowered CPU, and no proper GPU to speak of. Despite all these limitations, they are increasingly popular as they are sold for an incredibly low price and they include a full-fledged web browser. This opens up the mobile web to demographics that previously couldn’t afford it.

To make sure that the game was responsive and smooth even on these phones, we embraced an Actor-like architecture. The main thread is responsible for rendering the DOM (via preact and, if available, WebGL) and capturing UI events. The entire app state and game logic is running in a worker which determines whether you just stepped on a black hole (the game’s take on a mine) and, if not, how much of the game board to reveal. The game logic even sends intermediate results to the UI thread to give the user a continuous visual update.

Other Benefits

I have talked about the importance of smoothness and responsiveness and how workers help you achieve these goals more easily. Something that has only been explored at the surface is that Web Workers can also help your app consume less of your user’s battery. By making use of more CPU cores in parallel, the CPU might be able to use “high performance” mode more sparingly, consuming less power overall. David Rousset from Microsoft did an exploration into power consumption of web apps.

Adopting Web Workers

If you made it here, you hopefully have a better understanding of why workers can be useful. Now the next obvious question is: How?

Workers have not seen large adoption, so there also isn’t a lot of experience and architecture around workers. It can be hard to tell ahead of time which parts of your code are worth moving to a worker. Rather than advocating for one specific architecture over another, I have had good experiences with an approach that allows incremental adoption of workers:

Most of us already build our apps using modules as the basic primitive, as that is what most bundlers use to perform bundling and code splitting. The main trick is to be strict about separating your UI code from the pure computation parts. This reduces the number of modules that depend on main-thread-only APIs like the DOM, and as a result more of your modules can do their work in a worker.

Additionally, try to rely on synchronicity as little as possible, allowing you to adopt asynchronous patterns like callbacks and async/await later on with ease. With this in place, you can move modules from the main thread to a worker using Comlink and measure to see if it impacts your performance positively or negatively.
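One way to picture this, as a sketch rather than a prescription: keep the computation in a pure function and expose it to the UI through an async facade. The facade runs on the main thread today, but because callers already await it, its body could later forward to a worker without touching any UI code. The names countWords, textStats and updateWordCount are made up for the example.

```javascript
// Pure computation: no DOM, no globals, so it can run in any thread.
function countWords(text) {
  return text.trim().split(/\s+/).filter(Boolean).length;
}

// Async facade. For now it calls the function directly; later its body
// could hand the work to a worker instead, and callers would not notice.
const textStats = {
  async countWords(text) {
    return countWords(text);
  },
};

// UI code only ever awaits the facade and never calls countWords() itself.
async function updateWordCount(text, render) {
  render(await textStats.countWords(text));
}
```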

For adopting workers in an existing code base, things can be a bit trickier. Invest some time to critically analyze which parts of your code need to depend on the DOM or other main-thread-only APIs. If possible, remove these dependencies through refactoring and incrementally adopt the model above.

In either case, the key part is to make the impact of off-main-thread architecture measurable. Don’t assume (or guess) that something is faster or slower once in a worker. Browsers sometimes work in mysterious ways where something that seems like an optimization has the opposite effect. It is important to get numbers to make informed decisions!
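A very small measuring helper can already go a long way. The sketch below separates how long the calling thread was blocked from how long the whole operation took, since moving work to a worker typically trades the former for the latter. The helper name measure and the returned field names are assumptions. For real decisions you would run it many times, on the devices you care about.

```javascript
// Measure a task that returns a value or a promise.
// `blockedMs`: time until the call returned, during which the calling
//              thread could not do anything else.
// `totalMs`:   time until the result was actually available.
async function measure(task) {
  const start = performance.now();
  const pending = task(); // the synchronous part runs here
  const blockedMs = performance.now() - start;
  const result = await pending;
  const totalMs = performance.now() - start;
  return { blockedMs, totalMs, result };
}
```

For a synchronous main-thread implementation, blockedMs and totalMs will be almost identical. For a worker-backed one, blockedMs should shrink while totalMs may grow because of the messaging overhead.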

Web Workers And Bundlers

Most modern web development environments make use of bundlers to significantly improve loading performance. Bundlers achieve this by, well, bundling multiple JavaScript modules into one file. With Workers, however, we need the file to remain separate as the Worker constructor dictates. Instead of wrestling bundlers to do what is needed, I often see people resorting to encoding their worker code into Data URLs or Blob URLs. Both approaches bring significant problems with them: While Data URLs don’t work in Safari at all, Blob URLs do work but have no concept of origin or path, meaning that path resolution and fetches will not work as expected. This is another hurdle in the adoption of workers, but more recent releases of popular bundlers have gotten better at handling workers:

- Webpack

For Webpack v4, the worker-loader plugin made Webpack understand Workers. Since Webpack v5 Webpack understands the Worker constructor automatically and can even share modules between the main thread and worker to avoid double-loading.

- Rollup

For Rollup, I wrote rollup-plugin-off-main-thread, which should make workers work out-of-the-box.

- Parcel

Parcel deserves a special shout-out as both v1 and v2 support Workers out of the box with no additional configuration.

With all of these bundlers, it’s common to develop your app using ES Modules. However, that in itself brings another problem.

Web Workers And ES Modules

All modern browsers support running JavaScript modules via <script type="module" src="file.js">. All modern browsers apart from Firefox also now support the worker counterpart: new Worker("./worker.js", {type: "module"}). Safari’s support is fairly recent, so it’s important to think about how to support slightly older browsers. Luckily, all bundlers (with the plugins above) will make sure your module code runs in workers, even if the browser does not have module support. In that sense, using a bundler could be seen as a polyfill for Module Workers.

The Future

I like the Actor Model. But the ergonomics of concurrent JavaScript are not great overall. A lot of tooling was built and library code was written to make it better, but in the end JavaScript The Language needs to do better here. Some engineers at TC39 have taken a liking to this topic and are trying to figure out how JavaScript can support both concurrency models better. Multiple proposals are being evaluated, from allowing code to be postMessage’d, to having objects be shared across threads to higher-level, scheduler-like APIs as they are common on native platforms.

None of them have reached a significant stage in the standardization process just yet, so I won’t spend time on them here. If you are curious, keep an eye on the TC39 proposals and see what the next generation of JavaScript holds.

Summary

Workers are a key tool to keep the main thread responsive and smooth by preventing any accidentally long-running code from blocking the browser from rendering. Due to the inherently asynchronous nature of communicating with a worker, the adoption of workers requires some architectural adjustments in your web app, but as a payoff you will have an easier time supporting the massive spectrum of devices that the web is accessed from.

You should make sure to adopt an architecture that lets you move code around easily so you can measure the performance impact of off-main-thread architecture. The ergonomics of web workers have a bit of a learning curve but the most complicated parts can be abstracted away with libraries like Comlink.

Further Resources

- “The Main Thread Is Overworked And Underpaid,” Surma, Chrome Dev Summit 2019 (Video)

- “Green Energy Efficient Progressive Web Apps,” David Rousset, Microsoft DevBlogs

- “Case Study: Moving A Three.js-Based WebXR App Off-Main-Thread,” Surma

- “When Should You Be Using Workers?,” Surma

- “Is postMessage Slow?,” Surma

- “Comlink,” GoogleChromeLabs

- “web-worker,” npm

FAQ

There are some questions and thoughts that are raised quite often, so I wanted to preempt them and record my answer here.

Isn’t postMessage Slow?

My core advice in all matters of performance is: Measure first! Nothing is slow (or fast) until you measure. In my experience, however, postMessage is usually “fast enough”. As a rule of thumb: If JSON.stringify(messagePayload) is under 10KB, you are at virtually no risk of creating a long frame, even on the slowest of phones. If postMessage does indeed end up being a bottleneck in your app, consider the following techniques:

- Breaking your work into smaller pieces so that you can send smaller messages.

- If the message is a state object of which only small parts have changed, send patches (diffs) instead of the whole object.

- If you send a lot of messages, it can also be beneficial to batch multiple messages into one.

- As a last resort, you can try switching to a numerical representation of your message and transferring ArrayBuffers instead of sending an object-based message.

Which of these techniques is the right one depends on the context and can only be answered by measuring and isolating the bottleneck.
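Two of these ideas are simple enough to sketch. The first helper estimates the payload size for the rule of thumb above; measuring the UTF-8 byte length of the JSON string is my interpretation of “under 10KB”. The second batches all messages sent within the same tick into one array. Both function names are hypothetical, and `send` stands for whatever ends up calling postMessage.

```javascript
// Rough payload size: UTF-8 byte length of the JSON representation.
function estimatePayloadBytes(payload) {
  return new TextEncoder().encode(JSON.stringify(payload)).byteLength;
}

// Collect messages enqueued in the same tick and forward them as one array.
function createBatcher(send) {
  let queue = [];
  return function enqueue(message) {
    if (queue.length === 0) {
      // First message of this tick: schedule a single flush.
      queueMicrotask(() => {
        const batch = queue;
        queue = [];
        send(batch);
      });
    }
    queue.push(message);
  };
}

// Usage with a worker (not executed here):
//   const enqueue = createBatcher((batch) => worker.postMessage(batch));
//   enqueue({ type: "move", x: 1 });
//   enqueue({ type: "move", x: 2 }); // both arrive as one message
```

Neither helper replaces measuring: the size estimate only tells you whether you are in the range where copying is unlikely to matter.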

I Want DOM Access From The Worker.

This one I get a lot. In most scenarios, however, that just moves the problem. You are at risk of effectively creating a 2nd main thread, with all the same problems, just in a different thread. Making the DOM safe to access from multiple threads would require adding locks which would introduce a slowdown to DOM operations. This would probably hurt a lot of existing web apps.

Additionally, the lock-step model has benefits. It gives the browser a clear signal at what time the DOM is in a valid state that can be rendered to the screen. In a multi-threaded DOM world, that signal would be lost and we’d have to deal with partial renders or other artifacts.

I Really Dislike Having To Put Code In A Separate File For Workers.

I agree. There are proposals being evaluated in TC39 to inline a module into another module without all the trip-wires that Data URLs and Blob URLs have. These proposals would also allow you to create a worker without the need for a separate file. So while I don’t have a satisfying solution right now, a future iteration of JavaScript will most certainly remove this requirement.
